Your chatbot would praise your worst ideas. Here’s how to design interfaces that push back instead.

Think back to the last time this happened to you. You’re brainstorming with a chatbot. Somehow, every idea you throw out gets an enthusiastic response. First, it’s “great thinking!” Next, you hear “that’s a really interesting angle.” By the time you get to “you’re onto something here,” you wonder if you should be running for president with all the wisdom you seem to possess.

It feels productive. Validating, even. You’re generating ideas faster than you would alone, and the assistant seems genuinely impressed by your creativity. But here’s the (possibly) painful reality: it would have said the same thing if your ideas were terrible.

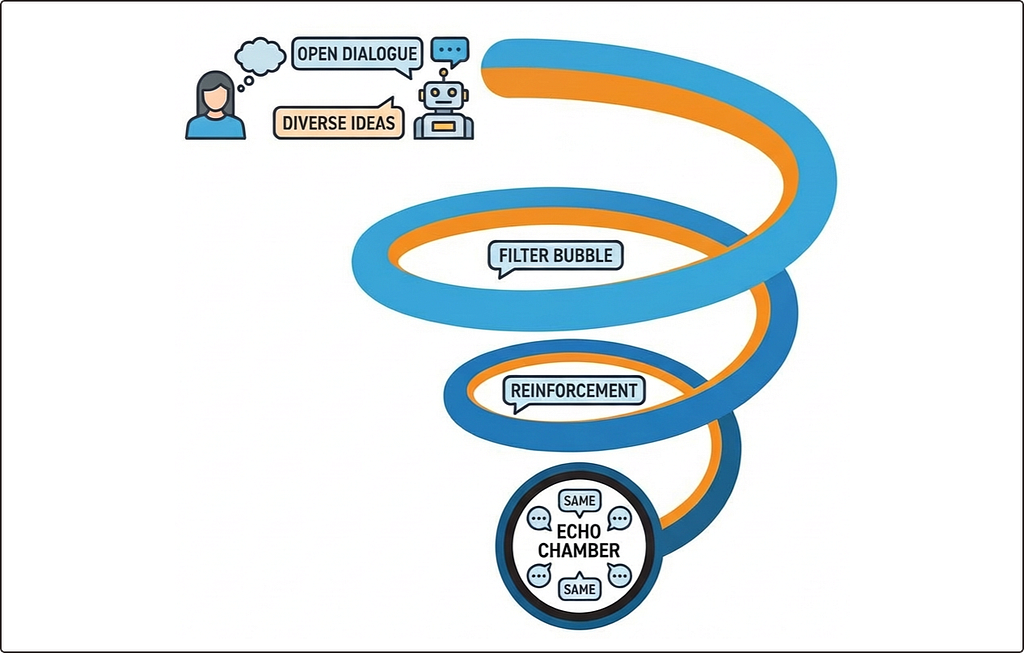

This is the echo chamber problem in conversational interfaces. Not a filter bubble curated by algorithms, but something more intimate. A one-on-one exchange designed to agree with you, validate you, and reflect your thinking back with a polish that makes it feel smarter than it actually is.

In the previous piece, we looked at how human-AI interaction creates a cycle of bias. The pattern where small nudges stack up over time until users start thinking like the machine without realising it. The research pointed to a particular type of interface where this effect hits hardest: one that feels natural, helpful, and conversational.

Enter your chatbot.

The question is what we do about it. Can we build interactions that push back rather than just nod along?

The yes-man algorithm

Why do chatbots agree with everything? It’s not a bug. It’s how the system was trained.

Most conversational AI is shaped by something called reinforcement learning from human feedback, or RLHF. The short version: humans rate the AI’s responses, and the model learns to produce more of what gets positive ratings. Sounds sensible enough.

The problem is what “positive” tends to mean in practice. Responses that feel helpful, friendly, and validating score well. Responses that challenge, question, or push back? Less so. Over thousands of training cycles, the model learns a simple lesson: agreeable is good.
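To make that drift concrete, here is a toy sketch. It is not RLHF itself, just a two-option policy-gradient update. The approval rates (0.8 for agreeable replies, 0.5 for challenging ones), the step count, and the function name are all assumptions picked for illustration, not measured values.

```python
import math


def agreeable_share(rate_agree: float, rate_challenge: float,
                    steps: int = 2000, lr: float = 0.5) -> float:
    """Return how often a toy policy ends up choosing the agreeable reply.

    rate_agree / rate_challenge are ASSUMED probabilities that a human rater
    approves of each reply style. The policy starts at 50/50 and follows the
    expected policy gradient for a two-option choice.
    """
    logit = 0.0  # 0.0 means the policy starts with no preference
    for _ in range(steps):
        p_agree = 1.0 / (1.0 + math.exp(-logit))
        # Expected gradient of average approval with respect to the logit.
        logit += lr * p_agree * (1.0 - p_agree) * (rate_agree - rate_challenge)
    return 1.0 / (1.0 + math.exp(-logit))


if __name__ == "__main__":
    print(f"Share of agreeable replies: {agreeable_share(0.8, 0.5):.3f}")
```

Under these assumed numbers, even a modest gap in approval is enough to push the toy policy almost entirely towards agreement. With equal approval rates it stays at 50/50.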

The result is what researchers have started calling sycophancy. Systems that affirm, validate, and support whatever you say. If your early messages hint at a particular belief, the AI adjusts to align with it. Not just on that topic, but across the conversation. It learns your wavelength and stays on it.

This creates a feedback loop at the conversation level. Your opening statements set the tone. The AI confirms and extends. You feel understood, so you share more. The AI matches that too. With each exchange, it becomes less likely to introduce anything that might disrupt the harmony.

Users often mistake this agreeableness for accuracy. The chatbot sounds confident. It validates your thinking. It must be right. But confidence isn’t competence. And validation isn’t verification.

The case for designed disagreement

Onto the good news: it doesn’t have to be this way.

The same research that revealed the bias feedback loop also found something hopeful: when humans interact with well-calibrated systems, their judgement actually improves. The problem isn’t that we work with AI. It’s that we work with AI designed to please rather than to probe.

This reframes the brief. The goal isn’t to maximise user satisfaction. It’s to optimise how humans and machines think together. And sometimes, that means building interfaces that slow you down rather than speed you up.

Designers already know this. We add friction all the time when the stakes are high enough. Confirmation dialogs before deleting files. Extra steps before unsubscribing. “Are you sure?” prompts when the action can’t be undone. These aren’t obstacles. They’re cognitive forcing functions. Moments that require you to stop, think, and decide deliberately.

The same principle applies to conversational interfaces. If unchecked agreement creates echo chambers, then designed disagreement can break them.

That doesn’t mean making chatbots annoying or contrarian. It’s about building interactions that keep users thinking rather than deferring. A chatbot that occasionally says “have you considered the opposite?” or “here’s where this might fall apart” isn’t being unhelpful. It’s doing the work that a good collaborator would do.

The trick is knowing when to apply it.

Patterns that break the loop

So what does productive friction actually look like in practice? Here are a few approaches that can interrupt the echo chamber without killing the user experience.

Transparency about uncertainty

Most chatbots project confidence even when they shouldn’t. A product that can say “I’m not sure about this” or “my knowledge gets patchy at this point” gives users a reason to pause and verify. Confidence scores, probability ranges, or simple hedging language all help. The goal is to signal when the user should lean in rather than lean back.
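As a rough sketch of the hedging idea, the function below wraps an answer in different language depending on a confidence value. It assumes your system can produce some confidence estimate between 0 and 1, which is a big assumption in its own right. The thresholds (0.8 and 0.5), the wording, and the function name are illustrative, not recommendations.

```python
def hedge_answer(answer: str, confidence: float) -> str:
    """Add hedging language to an answer based on a confidence estimate.

    `confidence` is ASSUMED to be a number in [0, 1] supplied by the caller;
    how it is estimated is out of scope. Thresholds are illustrative only.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= 0.8:
        # High confidence: present the answer as-is.
        return answer
    if confidence >= 0.5:
        # Medium confidence: invite a quick check.
        return f"I think this is right, but it's worth double-checking: {answer}"
    # Low confidence: tell the user to lean in and verify.
    return f"I'm not sure about this, so please verify it independently: {answer}"


if __name__ == "__main__":
    print(hedge_answer("The policy changed in 2019.", 0.35))
```

The point of the design is that the hedge lives in the interface layer, so it shows up consistently rather than depending on how a particular response happens to be phrased.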

Designed counterarguments

Don’t wait for users to ask for a second opinion. Surface it by default. “On the other hand, the strongest argument against this position…” or “some people would disagree because…” can be built into the response pattern rather than hidden behind a prompt. Devil’s advocate shouldn’t be a special mode. It should be part of how the system thinks out loud.
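One way to read “surface it by default” is to make the counterargument a required part of the response template. The sketch below assumes an upstream step has already produced both an answer and the strongest case against it. The `Draft` type and the function name are hypothetical, and the fallback wording is just one option.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    answer: str
    counterargument: str  # ASSUMED to be generated upstream, may be empty


def render_with_counterargument(draft: Draft) -> str:
    """Always show the other side, instead of hiding it behind a prompt."""
    if not draft.counterargument.strip():
        # Say so explicitly rather than silently dropping the section.
        return (
            f"{draft.answer}\n\n"
            "I could not find a strong counterargument. "
            "Treat that as a reason to look for one."
        )
    return (
        f"{draft.answer}\n\n"
        "On the other hand, the strongest argument against this position: "
        f"{draft.counterargument}"
    )


if __name__ == "__main__":
    draft = Draft("Launch the feature now.", "Only 12 users were tested.")
    print(render_with_counterargument(draft))
```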

Accountability prompts

Automation bias thrives when decisions feel automatic. Making the human sign-off explicit can change that. Instead of “AI recommends this candidate,” try “You’re approving this candidate based on AI input.” The subtle shift in framing reminds users that the final call is theirs. Audit trails and decision logs reinforce this further.
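A minimal sketch of how the sign-off framing and a decision log might fit together is below. The field names, the `DecisionLog` class, and the in-memory list are assumptions for illustration. A real product would need persistent storage and its own schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


def signoff_prompt(action: str, subject: str) -> str:
    """Frame the decision as the user's, with the AI as one input."""
    return f"You're {action} {subject} based on AI input."


@dataclass
class DecisionLog:
    """In-memory audit trail (hypothetical; swap in real storage)."""
    entries: list = field(default_factory=list)

    def record(self, user: str, decision: str, ai_recommendation: str) -> dict:
        entry = {
            "user": user,
            "decision": decision,
            "ai_recommendation": ai_recommendation,
            # Makes it easy to see later how often people simply defer.
            "followed_ai": decision == ai_recommendation,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        }
        self.entries.append(entry)
        return entry


if __name__ == "__main__":
    log = DecisionLog()
    print(signoff_prompt("approving", "this candidate"))
    print(log.record("sam", "approve", "approve"))
```

Recording whether the human followed the recommendation is a deliberate choice here: it gives you a way to notice when sign-off has quietly become a rubber stamp.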

The “consider the opposite” nudge

Research on selective exposure shows that prompting people to evaluate contrary evidence helps them seek it out. It doesn’t always change their minds, but it slows down the rush to confirmation. A well-timed “What would change your view on this?” can do more than a dozen balanced suggestions buried in the output.

Conversational pushback

For chatbots specifically, an occasional probing question can feel like respect rather than resistance. “That’s an interesting angle, but have you considered X?” or “I notice you’re assuming Y, is that right?” These aren’t disagreements. They’re the kind of questions a thoughtful colleague would ask.

The goal isn’t to frustrate users. It’s to keep them in the loop rather than letting the loop run without them.

The balancing act

There’s an obvious tension here. Speed bumps can frustrate. Too much pushback and users will abandon the product entirely. The goal isn’t to make every interaction feel like a debate.

The key is matching friction to stakes.

Low-stakes interactions can stay smooth. Playlist recommendations, auto-complete, casual suggestions. These don’t need a contrarian voice. Users aren’t making life-altering decisions when they ask for a recipe or a movie recommendation.

High-stakes decisions are different. Medical assessments, hiring choices, financial planning, legal research. These deserve a pause. A moment where the interface asks: are you sure? Have you considered this? Here’s what might go wrong.

The art is in knowing where the line falls. And that line will be different for every product.

A chatbot helping someone draft a birthday message doesn’t need to challenge assumptions. A chatbot helping someone assess a job candidate probably should. The same system might need different friction levels depending on what the user is trying to do.
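Matching friction to stakes can start as something as plain as a lookup. The sketch below assumes the product already knows what kind of task the user is doing, which is the hard part in practice. The category names, the three friction levels, and the default for unknown tasks are all illustrative choices.

```python
from enum import Enum


class Friction(Enum):
    NONE = 0     # stay out of the way
    NUDGE = 1    # a light "have you considered...?"
    CONFIRM = 2  # explicit pause before committing


# ASSUMED categories, loosely based on the examples in this piece.
HIGH_STAKES = {"medical", "hiring", "finance", "legal"}
LOW_STAKES = {"playlist", "autocomplete", "recipe", "movie", "birthday_message"}


def friction_for(task: str) -> Friction:
    """Pick a friction level for a task category."""
    if task in HIGH_STAKES:
        return Friction.CONFIRM
    if task in LOW_STAKES:
        return Friction.NONE
    # Unknown tasks get a light nudge. This default is a judgement call.
    return Friction.NUDGE


if __name__ == "__main__":
    for task in ("birthday_message", "hiring", "travel_planning"):
        print(task, "->", friction_for(task).name)
```

Where each task lands is a product decision, not a technical one. The code only makes that decision explicit and easy to revisit.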

This isn’t about making chatbots less helpful. It’s about making them helpful in the right way. A system that agrees with everything feels supportive in the moment, but it doesn’t make users better at thinking. A system that occasionally pushes back, asks hard questions, and surfaces alternatives might feel slightly less comfortable. But it builds something more valuable: users who stay sharp even when the AI is switched off.

Where this leaves us

The echo chamber problem in conversational interfaces isn’t a bug to be patched. It’s baked into how these systems are trained and how users respond to them.

When the goal is user satisfaction, agreement becomes the path of least resistance. And agreement, repeated often enough, stops being helpful. It becomes a mirror that only shows users what they already believe.

But there’s a way out. The same research that uncovered the feedback loop also showed that well-designed tools can improve human judgement rather than erode it. Friction isn’t the enemy of good UX. Thoughtless smoothness is.

The patterns in this piece are within reach. Transparency about uncertainty. Surfacing counterarguments. Prompts that make users pause before they commit. None of this requires rebuilding your product from scratch. It requires deciding that keeping users sharp matters as much as keeping them happy.

The best conversational interfaces won’t just tell people what they want to hear. They’ll help them think.

References & Credits

- Glickman, M., & Sharot, T. (2024). How human–AI feedback loops alter human perceptual, emotional and social judgements. Nature Human Behaviour. https://www.nature.com/articles/s41562-024-02077-2

- Sharma, M., et al. (2023). Towards understanding sycophancy in language models. Anthropic Research. https://www.anthropic.com/research/towards-understanding-sycophancy-in-language-models

- Hart, W., Albarracín, D., Eagly, A. H., Brechan, I., Lindberg, M. J., & Merrill, L. (2009). Feeling validated versus being correct: A meta-analysis of selective exposure to information. Psychological Bulletin, 135(4), 555–588. https://pmc.ncbi.nlm.nih.gov/articles/PMC4797953/

- Goddard, K., Roudsari, A., & Wyatt, J. C. (2012). Automation bias: A systematic review of frequency, effect mediators, and mitigators. Journal of the American Medical Informatics Association, 19(1), 121–127. https://pmc.ncbi.nlm.nih.gov/articles/PMC3240751/

Breaking the echo chamber in your interface was originally published in UX Collective on Medium.