Human-Centred Design has grown up. It’s time we did too

We spent twenty-five years making technology work for users. Now we need to make it work for human beings. These are not the same thing, and the distance between them is where a generation of harm accumulated.

In the year 2000, Steve Krug published four words that became the operating philosophy of a generation of designers: Don’t Make Me Think. The message was radical in its simplicity: technology should work for people, not the other way around. Usability was the revelation. Friction was the enemy. If a user could not complete a task, the product had failed, full stop. Krug gave practitioners the language. Don Norman had already given them the philosophy twelve years earlier in The Design of Everyday Things, with its foundational argument that designed objects should respect the cognitive reality of the human beings using them. Together, these ideas seeded an entire discipline.

It was the right idea at the right moment. And it changed everything.

I remember that moment clearly. I was starting my career in Italy, working on some of the first accessible digital banking interfaces in the country. The conversation was about the information superhighway and its extraordinary promises. Technology as liberation. Technology as the great equaliser. McLuhan’s dream of technology as an extension of our human capacities, finally becoming real.

Twenty-five years later, how did that dream turn out?

We did not build the information superhighway. We built an attention economy

The usability movement succeeded. Brilliantly. Interfaces became intuitive. Friction disappeared. Onboarding flows became seamless. Checkout processes became invisible. And as the friction between humans and technology dissolved, something else happened.

We became the product.

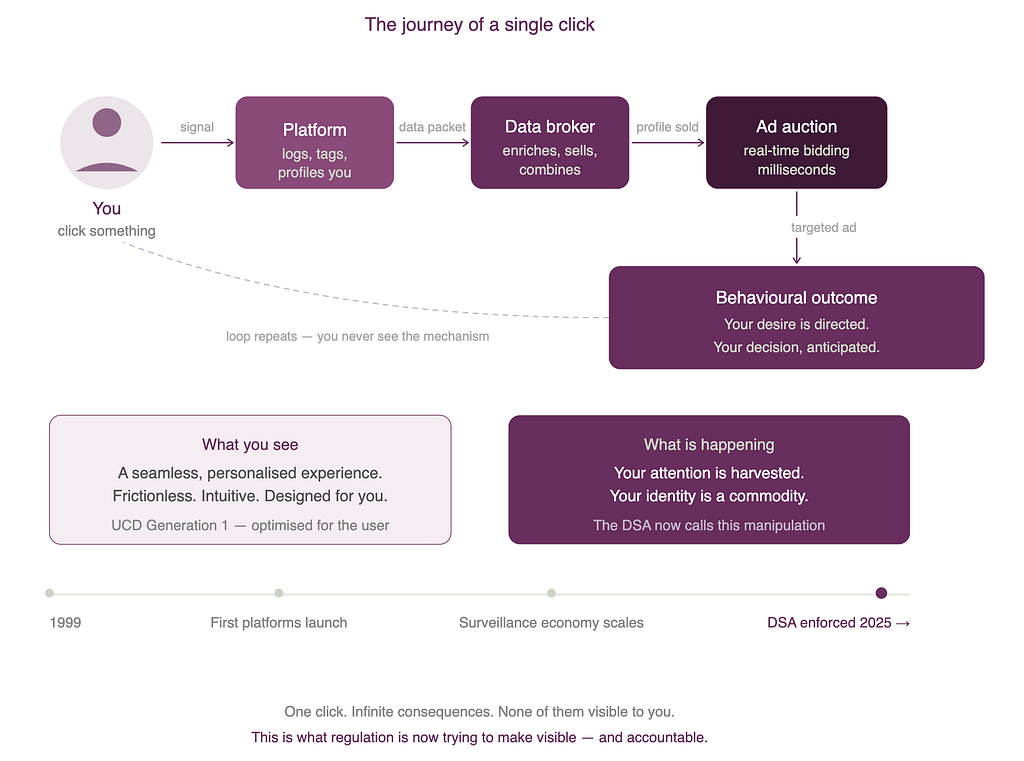

Not metaphorically. Structurally. Architecturally. Our attention became a commodity. Our data became a strategic asset. Our decision-making became the territory over which platforms competed. The seamless experience we had worked so hard to create was not neutral. It was extractive. Every frictionless scroll, every perfectly timed notification, every interface optimised for engagement rather than wellbeing, was a carefully engineered mechanism for capturing something from us.

Umberto Eco saw it coming. In a 2015 lecture at the University of Turin, he warned that social media had given “legions of idiots” the same reach once reserved for more accountable public speech. He was not attacking free speech. He was describing the collapse of mediation: the erosion of editorial judgment, the flattening of expertise, the algorithmic equivalence of radically unequal claims. The Nobel Prize winner and the conspiracy theorist, side by side, algorithmically indistinguishable.

We told ourselves we were building a democratic, pluralistic information society. What we actually built was an attention economy powered by behavioural prediction and manipulation. And we did it with good UX.

The Romans understood the mechanism perfectly: panem et circenses. Bread and circuses. Keep people fed and entertained and you control them without them noticing. We just updated the interface. The bread became free content. The circuses became infinite scroll. The control became data-driven behavioural targeting so precise it could move elections, radicalise teenagers, and direct desire itself.

This did not happen in spite of human-centred design. It happened, in part, because of it. Because we optimised relentlessly for the user’s immediate experience without ever asking what the cumulative effect of that experience was on the human being living it. On the citizen. On the society.

The second generation asks a different question

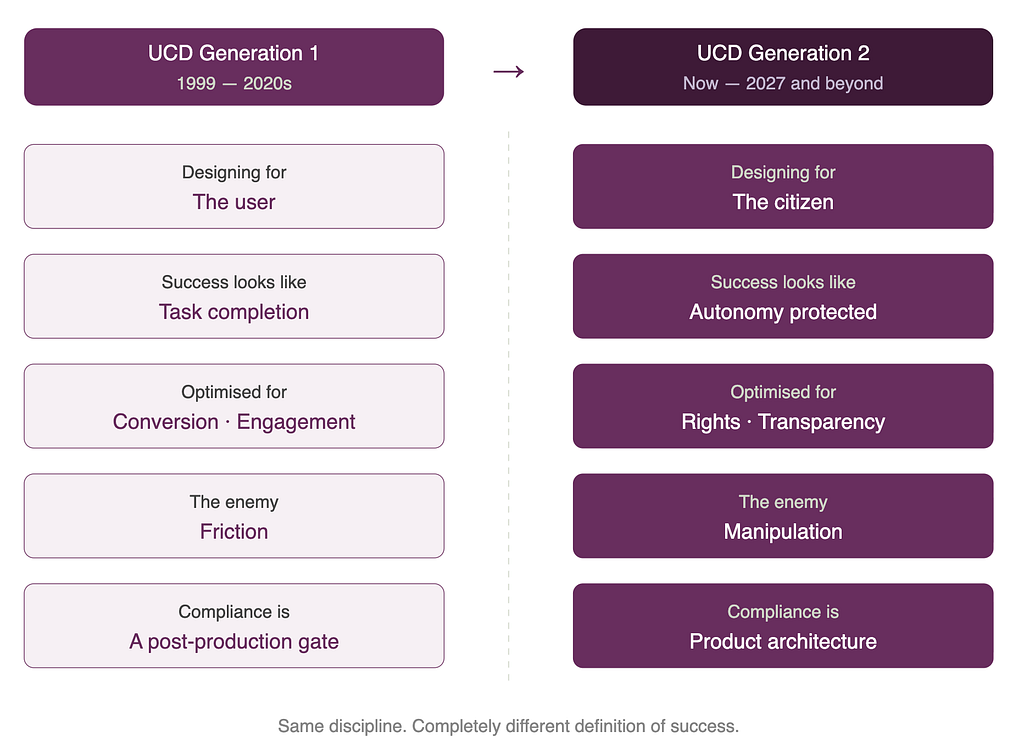

The first generation of human-centred design asked: does this work for the user?

The second generation asks: does this work for the human being, the citizen, and the society they live in?

These are not the same question. And the gap between them is where twenty-five years of harm accumulated.

The European Union has spent the last decade building a regulatory framework that is, at its core, an attempt to answer that second question at societal scale. It is imperfect, complex, sometimes maddening in its bureaucratic weight. But its underlying premise is correct: that technology operating at scale cannot be governed only by individual user preferences, because individuals interacting with systems they cannot see, understand, or contest are not making fully free choices. They are being managed.

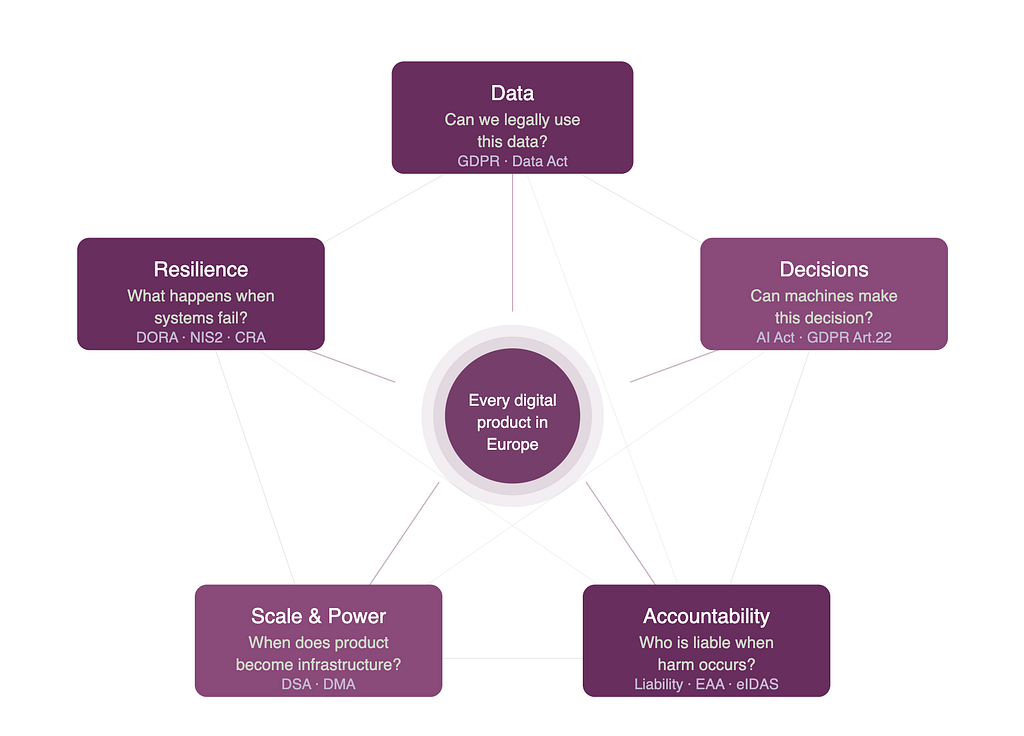

The Digital Services Act now treats dark patterns, those manipulative interface tricks designed to override your intentions, not as clever UX but as violations. The European Parliament’s own research describes them as techniques that “influence consumers to make decisions they would not have taken otherwise.” Practices that “materially distort or impair the ability of recipients of the service to make autonomous and informed choices.” The Commission has already moved against TikTok and Meta under DSA transparency obligations. Manipulation, under European law, is becoming a sanctionable offence.

The AI Act goes further. It prohibits subliminal techniques, purposefully deceptive AI systems, and the exploitation of vulnerabilities based on age, disability, or economic situation. It requires human oversight, responsibility, and accountability mechanisms that can be inspected rather than merely asserted. It is why compliance in Europe no longer sits neatly inside legal review at the end of delivery. It now reaches into product decisions, interface patterns, documentation, governance, and operations.

And these are not isolated regulations. They cluster around five compounding dimensions that now define the compliance architecture of every digital product operating in Europe: data, decisions, resilience, scale & power, and accountability. Every product feature activates new regulatory layers. Obligations stack — they do not replace each other. Risk compounds as products scale. And compliance cannot be retrofitted after the fact.

This is not restriction dressed up as protection. This is the infrastructure of a society that has decided its citizens are not commodities.

Design is no longer about the user. It is about the human being

The shift is not incremental. It is a redefinition of what design is for.

For a quarter of a century, we measured design success by task completion rates, satisfaction scores, conversion funnels, and NPS. These are not wrong metrics. But they are incomplete ones, because they measure the transaction and miss the transformation. They measure whether the user got what they came for, not whether the system that delivered it left them more or less autonomous, more or less informed, more or less capable of making genuinely free choices in the future.

The regulatory frameworks now being enforced across Europe are operationalising a different definition of success. One that asks not just “did the user complete the flow?” but “did the system respect their rights while they did?” Not just “was the experience seamless?” but “was the experience honest?” Not just “did we hit our engagement metrics?” but “did we strengthen or erode the person’s capacity to make autonomous decisions?”

This is human-centred design in its mature form. And it demands skills that most product organisations do not yet have: the ability to read regulation as a design brief, to translate legal obligation into interaction requirement, to build processes where compliant and ethical behaviour is not an additional step but the natural output of how the work flows.

We are not moving from usability to bureaucracy. We are moving from designing for the individual user’s immediate experience to designing for the citizen’s fundamental rights. From optimising transactions to protecting the conditions under which genuine human choice is possible.

We are one step deeper

Twenty-five years ago, inaccessible design was an obstacle to a commercial transaction. If you could not complete a checkout flow, you went elsewhere. The harm was contained, the feedback loop was immediate, the consequences were commercial.

Today the stakes are categorically different. The lack of access is not between a user and a product. It is between a citizen and their own decision-making. The manipulation is not a broken button. It is a system designed, at an architectural level, to override your intentions, direct your attention, and convert your vulnerability into engagement. To make you less free, incrementally, invisibly, by design.

We have never seen wealth this concentrated, or ideology this polarised, or attention this commodified. And we built the infrastructure that made it possible, one frictionless interface at a time.

The good news — and there is good news — is that the tools to build differently now exist. The regulatory frameworks are being enforced. The definitions of harm are becoming clearer. The accountability mechanisms are becoming real. The question is whether the design and product community will recognise these frameworks for what they are: not external constraints to be minimised, but the design brief of the next era.

What this means for anyone who builds things

If you work in product, design, or technology leadership, the second generation of human-centred design is not optional. It is the context you are operating in, whether you have registered it or not.

It means that your research practice needs to account for citizen rights, not just user preferences. That your design system needs to encode accessibility and transparency, not as a compliance checklist but as a foundational constraint. That your product governance needs to ask, at every stage, not just “does this work?” but “does this respect?” That your definition of done includes audit-ready evidence that the right things happened in the right way.

It means building the kind of structures that make ethical behaviour the path of least resistance, not an additional cognitive load on already stretched teams. It means treating regulation not as the enemy of creativity but as the framework within which creativity becomes trustworthy.

It means, finally, asking the question that usability never asked: not “can the user complete the task?” but “does completing the task leave the human being more or less free?”

That is the design question of our moment. And answering it well may be the most important professional challenge our field now faces.

Author’s Note: The thinking, research, and arguments in this article are entirely human. AI reviewed form and structure only.

Patrizia Bertini works at the intersection of human-centred design, data governance, and regulatory strategy, and is the founder of Euler.

Originally published in UX Collective on Medium.