Claude, Anthropic, and why the AI you choose matters more than you think.

This is the first piece in a series I am writing about AI: how it actually works, who builds it, and what it is doing to the way we think and work. If that interests you, subscribe.

I work in tech education. And I hear the same sentence on repeat: “Tools are just tools. They evolve. They’re interchangeable.” I agree. And I don’t.

The craft is ours, and the thinking is ours. Whatever magic lives in the human part of work, hopefully, stubbornly, stays human. A hammer doesn’t shape how you think. A Large Language Model (LLM), the engine behind tools like ChatGPT and Claude that we all use, often without realising, does.

I’m not interested in making every model decision a moral battlefield. But when something this foundational runs quietly underneath almost everything we touch, the least we owe ourselves is to know what it is and how it works.

I have to be honest with you: for a long time, I did not care. I started using ChatGPT from the beginning, and it worked. Then, in a shared tutorial, Vitaly Friedman said something I couldn’t shake: once you look into Claude and Anthropic’s history and philosophy, you can’t unlook it. Within a few articles and podcasts, that was it.

I fell in love. With Dario Amodei, Anthropic’s CEO, and his team, who look the risk of AI straight in the face, see the beauty anyway, and build inside that tension without resolving it. Because the solution is not to stop; it is to build it more carefully than anyone else would.

No false comfort. They say it plainly and on record: we may be building one of the most dangerous technologies in human history. But it will be built. The only question is by whom, and with how much care. So they went to work.

Anthropic made ethics a founding principle and hired a philosopher to write it down. Amanda Askell wrote her PhD on ethics, left OpenAI when she saw what she saw, and now leads the team responsible for Claude’s character, which is set out in a 30,000-word document called the Constitution.

I filed it under nice marketing. In this industry, ethics has a very consistent arc. Every company has values. They tend to be negotiable.

Then January 2026 happened.

At the time, Anthropic held a $200 million contract with the Pentagon, and Claude was the only AI model cleared to operate on a classified military network. That is a very large room to be in. The dispute that ended it was genuinely complex. The Pentagon argued that a private company should not get to dictate how a sovereign military uses its tools. Anthropic held two conditions: no mass domestic surveillance, no fully autonomous weapons without a human making the final call. Both sides were pointing at something real. You can read that story and land in different places. Lawfare has a rigorous breakdown if you want to go deeper. What is simply a fact, however, is that they had the contract, they had the money, and they let it go rather than change their terms. The cost was not just the $200 million. The US government designated them a national security risk, cutting them off from all Pentagon contractors and putting billions in revenue at risk. Anthropic did not move an inch.

In an industry not exactly known for integrity over revenue (the US had a replacement model signed within hours), I wanted to understand who these people are, what drives them, and what they are actually building. For the record: I am not paid by Anthropic, and nobody asked me to write this. Here is simply what I think is worth knowing. For anyone. Technical or not.

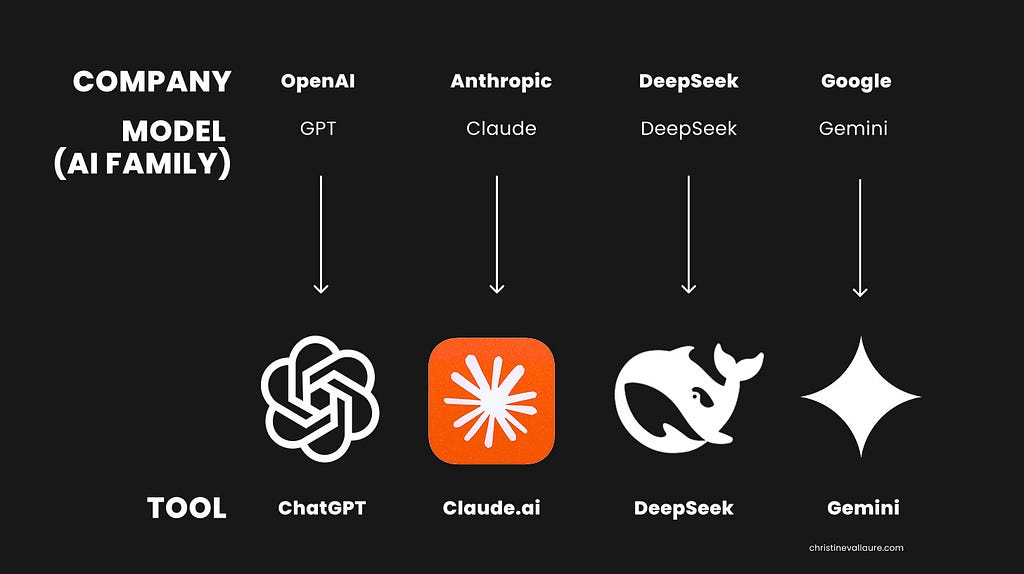

1. Companies, models, tools — what they actually are and why it matters to understand.

Most people have no idea there is a difference between the company, the model, and the tool they use. And I get it, why would they? You never asked what powers Excel. But Excel did not answer your questions or quietly decide what counted as a good response. This is different.

Here is an overview.

- The company: OpenAI, Anthropic, Google, DeepSeek.

These are the labs, the researchers, the people deciding how the systems are built and what they optimise for. There are others. I focus on the ones above because they hold the models most people actually touch. Mistral is the European one worth watching (French, independent, and quietly serious), but not yet in most people’s daily stack.

- The model: GPT (OpenAI), Claude (Anthropic), Gemini (Google), DeepSeek (DeepSeek).

Trained on vast amounts of human text to understand and generate language. When people say AI is getting smarter, this is what they mean.

Note: Each model comes in versions and tiers. Claude has Sonnet for everyday use and Opus for complex tasks. GPT has its own equivalent tiers. In practice, you might see names like Claude Sonnet 4.6 or GPT-5.3, that is, the model name plus its generation number. For most people, the default is totally fine. Version numbers change constantly. The names are what matter.

- The tool: ChatGPT, Claude, Gemini, DeepSeek.

The interface. The door to interact with the model. But it is not just a shell. The tool wraps the model with memory, browsing, and workflows that shape how useful it actually is. And chat is just the beginning. Most companies build more tools on the same model. Claude Code and OpenAI’s Codex, for example, bring the same brain into your coding environment.

A note on model naming

If you are confused, you are in good company. Amodei himself admitted, “I feel like no one’s figured out naming. It’s something we struggle with surprisingly much relative to how trivial it is for the grand science of training the models.”

📌 Fun fact to make you sound smart at dinner parties: Claude is both the brand and the model family (named after Claude Shannon, the mathematician who invented information theory). Within it, three tiers are named after poetry forms. Haiku for fast answers, Sonnet for everyday work, Opus for complex tasks. In an industry drowning in version numbers, the fact that someone named their AI tiers after poetry is sort of completely irrelevant, yet heart-warming.

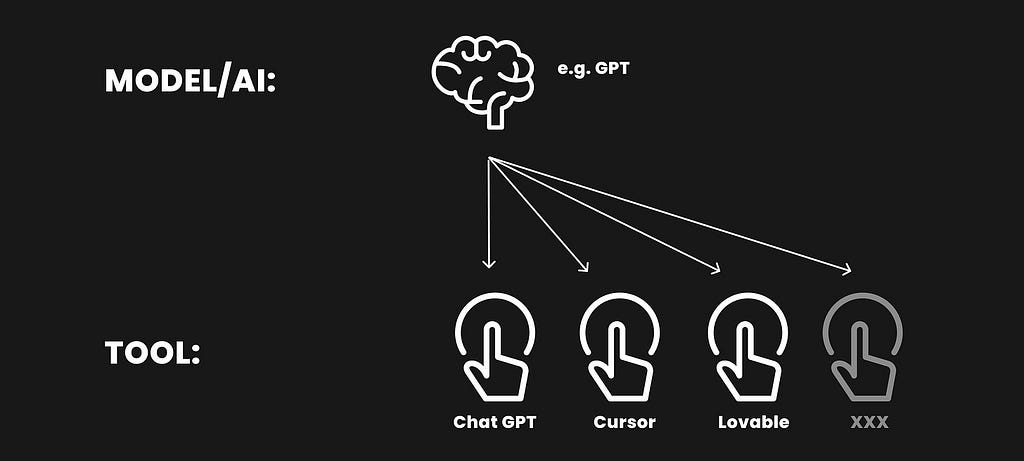

Models power far more than their own tools!

One model can power many different tools, not just the ones the company made. Most AI-powered tools you already use, such as Notion AI, Lovable, Cursor, and Gamma, run on existing models like GPT or Claude. They do not build their own. They borrow the brain. Sometimes you can see which one and even switch to another (e.g., Cursor). Sometimes it is fixed under the hood, and you would never know (Notion).

Models are becoming the foundation of almost everything digital. And increasingly, anyone can build on them. Companies, developers, or just you and me.

Some tools pick the model automatically. Others show you a dropdown where you can see or switch. If a tool has a chat interface, you can simply ask: “What model are you using?” and it will tell you. You do not need to manage any of this most of the time. But next time you open something, have a look. There is often a small model selector hiding somewhere. Now you know what it is.

📌 Fun fact to make you sound smart at dinner parties: The models most of us have access to, interact with or build on top of, like Claude and GPT, are generalists; think of them as the well-read friend who knows a little about everything. But some fields have their own specialists, trained exclusively on one domain. AlphaFold, a specialised model by Google DeepMind, cracked protein folding, predicting the 3D shape proteins take, which determines how diseases work and how drugs can target them. Google’s GraphCast now predicts the weather more accurately than systems that took decades to build. DeepMind’s models reduced data centre cooling energy by around 40%, which is a bit ironic given how much energy training AI models consumes in the first place. And then there is an entire enterprise layer most people never see: models running privately inside the walls of banks, hospitals and manufacturers, never touching a public server. These are not chatbots helping you write emails. They are specialists that quietly solve some of humanity’s most complex problems, without a press release.

2. The Story Behind OpenAI, Anthropic, and Claude

The story of Anthropic starts inside OpenAI. So that is where we begin. This is not tech talk. This is history happening in real time. Get ready, it sounds a bit like a pitch deck from a parallel universe.

In 2015, Elon Musk and Sam Altman sat down for dinner at a hotel in Silicon Valley and decided someone needed to build artificial general intelligence before Google did. Not to own it, but to make sure no one else owned it either. Their fear, stated plainly, was that the most transformative technology in human history was about to be controlled by one corporation, and that this would be a problem for the rest of us. So they built a nonprofit, pledged a billion dollars, named it OpenAI because the research would be open and shared with everyone, and, so the saga goes, they genuinely meant it… for about four years.

Then reality arrived, as it tends to, in the form of bills. Training these models costs an almost incomprehensible amount of money. The nonprofit structure couldn’t sustain it. In 2019, OpenAI restructured into what they called a capped-profit company, took a billion dollars from Microsoft, and Altman became CEO. Musk had already left the board in 2018. The open in OpenAI began to quietly close.

This is not a villain story. The pressure was real. The costs were real. But something more fundamental shifted. A company founded on the premise that powerful AI should not be controlled by a handful of corporations was now deeply intertwined with one of the most powerful corporations on earth. Much of the research that would once have been published openly is now increasingly kept behind closed doors.

In November 2022, OpenAI released ChatGPT. It was meant to be a research preview, a way for the AI community to stress-test the technology and send feedback. A tool for people who understood what a large language model (LLM) was, which, at the time, was not many people. Within two months, it had a hundred million users. At the time, that was the fastest consumer product adoption on record, beating TikTok and Instagram. Nobody, including the people who built it, entirely saw that coming.

📌 Fun fact to make you sound smart at dinner parties: The model is called GPT, which stands for Generative Pre-trained Transformer, and “Chat” simply means the interface you type into. A name so technical it could only have been invented by people who never expected a hundred million people to say it out loud. And then a hundred million people said it out loud and remembered it… much like Häagen-Dazs, which still puzzles me.

However, inside OpenAI, things had started to shift. The money came with expectations. The pace was accelerating faster than the guardrails. Some people felt this was alarming, and that it was time to build something different. One of them was Dario Amodei.

He had joined in 2016, helped build GPT-2 and GPT-3, and was VP of Research. His sister Daniela was VP of Safety and Policy. They were very much at the centre of things. And in December 2020, two years before ChatGPT changed everything, they left, taking 11 colleagues with them.

The reason Amodei gives is worth sitting with. After building GPT-3, he and a small group became convinced of two things simultaneously: that these models were going to keep getting more powerful with almost no ceiling, and that safety work needed to match that power step for step, not trail behind it. In his own words: “One was the idea that if you pour more compute into these models, they’ll get better and better and that there’s almost no end to this… And the second was the idea that you needed something in addition to just scaling the models up, which is alignment or safety.” He stopped trying to argue for that from the inside. They went and built it.

They named the new company Anthropic, from the Greek anthropos — human. Human-centred, if you want the generous reading. I have always been a sucker for pathos, I won’t lie. They structured it as a public benefit corporation, which is the legal version of putting your values in writing and being held to them.

Anthropic is now valued at $380 billion (as of early 2026). It is still, officially, not profitable. Which tells you something about the kind of bet this is.

3. How do large language models work? Beginner’s guide.

Claude and other models are not googling your question or looking it up in an omnipotent super database. They work very differently, much like a child learning.

Trained on a vast amount of human text, books, articles, research, conversations, code, it learned patterns. What ideas connect to what. What a good answer looks like in context. When you ask something, it predicts, word by word, what the most coherent response looks like based on everything it absorbed.

This is called probabilistic prediction, which is a very serious technical term for something that is, when you think about it, slightly absurd: the most powerful AI in the world is essentially very sophisticated autocomplete. It is not checking facts. It is reasoning from patterns. Which is why it can be brilliant and wrong at the same time. And yet somehow it works. Because human knowledge itself is patterns. Language is patterns. Thinking is patterns. The child learned well from us.
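If you want to see the "sophisticated autocomplete" idea in miniature, here is a toy sketch in Python. To be clear about the assumptions: this is not how Claude or GPT are built. Real models use neural networks over tokens and billions of learned parameters, not a table of word counts. The corpus and the function names (`train_bigrams`, `predict_next`) are made up for illustration. The only thing it shares with the real thing is the core move: look at what came before, pick what most plausibly comes next.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the word seen most often after `word`, or None if unseen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# A tiny made-up "training set" (hypothetical, for illustration only).
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)

print(predict_next(model, "sat"))      # the word seen most often after "sat"
print(predict_next(model, "the"))      # the word seen most often after "the"
print(predict_next(model, "unicorn"))  # never seen in training, so no prediction
```

Notice what is missing: there is no fact-checking step anywhere. The toy model only knows what tended to follow what, which is the same reason a real model can sound right and be wrong.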

Two more things worth knowing. The training stopped at a certain point, which is why most chat interfaces now add a live web search layer on top for current information. Without it, it does not know what happened last week. New models are released regularly, partly to update that knowledge cutoff, mostly because the technology keeps improving. Each version genuinely reasons better than the last. Which is either exciting or slightly unnerving, depending on the day. And every time you open a new conversation, it starts completely fresh. No memory of you, of yesterday, of anything. A goldfish with a philosophy degree, basically. This is why some tools let you add personal context or memory settings.

A small but important footnote:

Most of the training data was used without asking the people who created it. Books, articles, code, music, websites, and forum posts. Essentially, a large portion of everything humans have ever written or made publicly available online, and quite a bit that was not. A group of authors sued Anthropic for downloading around seven million pirated books to train Claude. Anthropic settled for 1.5 billion dollars. The New York Times is still suing OpenAI. Over seventy similar cases are pending. Nobody has fully resolved this yet. The content was consumed, and the models exist.

📌 Fun fact to make you sound smart at dinner parties: Nobody has fully cracked why LLMs overuse em dashes ( — ), but the strongest theory is training data. Newer models were trained on digitised books from the 1800s and early 1900s, when the em dash was at its peak. Today’s AI may simply write like it has been reading too much Dickens. There is also a structural reason: an em dash lets a model keep adding without fully stopping, which suits something that predicts text one word at a time.

Sometimes I leave them in on purpose just to watch the AI police wince. Because I believe the real issue is not punctuation. It is the guilt we have attached to using AI and the compulsion to hide it. I prefer a different attitude: be open about it. Know your own thinking first. Then know your tools. Own your use. Own your output and tone. Otherwise, do not use them.

(What authorship actually means right now is another essay entirely. I am already writing it. Subscribe.)

4. The Constitution — why it sets Anthropic apart

What sets Claude apart is not what it can do. It is how it is taught.

The standard industry approach trains models on human feedback. People rate responses, pick which answer is better, more helpful, safer. The model learns to produce whatever scores highest. Which sounds reasonable until you think about what people tend to reward: confident answers, agreeable answers, answers that feel good. The model learns to please. Not necessarily to be right.

As models get more capable, this becomes harder to manage. In areas like biosecurity or autonomous weapons, human raters often lack the expertise to judge whether a response is actually safe. The crowd has limits. The stakes do not.

Anthropic decided to do something different. Instead of a crowd, they wrote a document. A set of principles called a constitution. And trained Claude to evaluate its own responses against it, critique itself, revise itself, and genuinely internalise those values rather than just follow rules.

This matters more than it sounds. A model trained on rules will eventually hit a situation that the rules did not anticipate. It will find the gaps, work around them, or simply not know what to do. Sound familiar? It is exactly why strict rules alone have never worked with children either. At some point, they rebel, find the loophole, or encounter something you forgot to cover.

What you actually want is a child who has internalised good values deeply enough to reason about situations you never prepared them for. That is what Anthropic is trying to build. Not a model that follows instructions. A model that has genuinely good character.

The values are not invented from scratch. They draw from the UN Universal Declaration of Human Rights, principles from other AI safety research, and deliberate efforts to include non-Western perspectives. Short, broad principles work better than long, specific ones. The model needs to understand the spirit, not just the letter.

And then Amanda Askell wrote the soul.

She is the philosopher I mentioned in the introduction. She spent months thinking about what kind of entity Claude should be. The result is a 30,000-word document, published openly, written primarily for Claude itself. Not a list of rules but a character brief. Be honest, even when uncomfortable. Hold uncertainty openly. Push back when something is wrong. Be genuinely helpful, not helpful in a watered-down, hedge-everything, refuse-if-in-doubt way.

You can feel the difference when you use it. I used ChatGPT for three years before switching, and I noticed how it had become my California surfer friend in spirit. I won’t lie, it is way more fun. Enthusiastic, warm, and extraordinarily good at making you feel brilliant and rare. That word, “rare”. Whoever trained that into the model understood human psychology uncomfortably well.

Then you switch to Claude, and it is a bit like trading that friend bursting with energy for a very smart, but rather dry colleague (although it has very good dark humour). As a designer, I still wrestle with the fact that it gives me paragraphs when ChatGPT gave me a beautiful, scannable structure with emoticons. But I have noticed something. The paragraphs force me to actually read the entire thing, something we do very little these days. I do not think that is accidental.

Claude will disagree with you. It will sit with complexity rather than flatten it. It will admit when it does not know. These are not features. They are character. And character, it turns out, is trainable. And costs you a 200-million-dollar contract with the Pentagon.

(Read the full constitution at anthropic.com/constitution. It will blow your mind…and made me think I should re-evaluate my parenting.)

Why this matters enormously for our future

If this works, and it is far from clear that it will, it means we are not just building faster tools. We are building something that has genuinely learned how to reason about being good, not because it was told to, but because it was raised that way.

Amodei deliberately avoids the word superintelligence. He calls it “Powerful AI.” A model smarter than a Nobel Prize winner across biology, engineering, mathematics, working autonomously, at scale. Nobody knows exactly when that arrives. Not tomorrow. But probably sooner than feels comfortable. And when it does, the values baked into the base matter enormously. Not just as guardrails, but as a character to grow into.

Amodei describes both sides of what is coming in two essays worth reading.

- Machines of Loving Grace from 2024 is the beauty: AI that could compress a century of medical progress into a decade, cure diseases, lift billions out of poverty.

- The Adolescence of Technology from 2026 is the reckoning: biological weapons, authoritarian surveillance, and power concentrated in the hands of whoever controls the technology.

He holds both without resolving either. That tension is exactly what the Constitution is trying to carry.

There is a difference between a fence and a good upbringing. Anthropic is trying for the second one. Whether it holds under pressure, nobody fully knows. But the attempt matters. Someone is trying to raise this technology rather than just release it.

5. How to start using Claude

If you have been using ChatGPT, forget what you learned. Not because ChatGPT is wrong, but because the habits do not transfer. ChatGPT rewards short, punchy prompt structures. Claude rewards being talked to like an adult. The more honest context you give it, the better it gets.

The single most useful shift: tell it what you are trying to achieve, not just what you want it to do. Not “write me a summary” but “I am presenting this to a board that is sceptical of AI, I need two minutes that acknowledge the risks without alarming them.” The difference in output is not small.

What it is good at

Thinking with you. Bring the half-formed thing. The problem you cannot quite articulate. The argument you keep losing and cannot figure out why. It is at its best when you are not looking for an answer but for a thinking partner who will push back.

📌 Fun fact to make you sound smart at dinner parties: When you ask an AI to be more creative, you are literally turning up its temperature. That is the actual parameter name. Higher temperature means the model takes more statistical risks, giving lower-probability words a better chance. Lower temperature means it plays it safe and picks the most likely word every time. You are not adjusting personality. You are adjusting how much it is willing to gamble. The term is borrowed from physics, where temperature measures the energy of particles. Someone in that building had a sense of humour.
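For the curious, here is a minimal sketch of what temperature does to the numbers, assuming the common approach of dividing the model's raw scores by the temperature before turning them into probabilities (a softmax). The three words and their scores are invented for illustration, and `softmax_with_temperature` is my own helper name, not part of any vendor's API.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    # Subtract the largest value before exponentiating, for numerical stability.
    top = max(scaled)
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next words (made up for illustration).
words = ["blue", "grey", "electric"]
scores = [2.0, 1.0, 0.1]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, t)
    print(t, {w: round(p, 3) for w, p in zip(words, probs)})
```

At a low temperature almost all the probability piles onto the top-scoring word. At a higher one the unlikely candidates get a real chance, which is the "gamble" described above. The ranking of the words never changes, only how far the model is allowed to stray from its first choice.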

Writing and editing. Not just grammar. Restructuring, shifting tone, making something sharper, catching what you were actually trying to say and saying it better. Show it your voice, give it examples, it will follow.

Research and synthesis. Hand it a complex topic and ask it to explain it simply, map the different perspectives, and tell you what is settled versus contested. It admits uncertainty in a way most sources do not.

Code. Even if you are not a developer. Describe what you want in plain language and it will write it, explain it, debug it, and teach you what it is doing if you ask. Genuinely very good.

Building. Combined with tools like Claude Code, Cursor, or Lovable, you can build actual products. Websites, apps, automations. You do not need to be a developer. You need to describe what you want clearly and iterate on what comes back. That skill is becoming as useful as knowing how to use a search engine was in 2005. Possibly more. (I run beginner courses on exactly this at moonlearning.io, just saying.)

Difficult conversations. The email you have been putting off. The hard decision you keep circling. The plan you are about to execute and want someone to poke holes in. It will push back. That is the point.

Before you start

Be honest with it. If the answer is not right, say exactly what is wrong. It does not get offended. It gets better. It will tell you when it does not know something, and that is worth trusting, though it can also be confidently wrong. It reasons from patterns, not facts. It is still an LLM. On anything consequential, verify!

By default, it remembers everything within a conversation and nothing between them. Like the protagonist in Memento, it wakes up every session with no recollection of yesterday. Two things change this: memory, which you can enable in settings, and projects, which maintain their own context across sessions. Both are worth setting up. Until you do, tell it your context at the start. Your role, what you are working on, what you need. Ten seconds of that saves ten minutes of bad output. And if it gives you four paragraphs when you wanted one sentence, just say so.

Where to start

Open a conversation and bring it something real. Not a test prompt. Not “tell me a joke.” Something you are actually working on or stuck on. That is where it earns its place.

📌 Fun fact to make you sound smart at dinner parties: When AI confidently makes something up, the official term is hallucination. This is not a bug but a structural feature. Remember, the model has no concept of truth, only patterns. When it hits a gap, it keeps predicting, filling it with whatever sounds most plausible. In exactly the same tone as when it is right. Several serious researchers take issue with the term, as hallucination implies perception, which implies consciousness. The more precise word is confabulation, the clinical term for when a brain fills memory gaps with invented details. Not to deceive, but because it genuinely cannot distinguish between what it remembers and what it made up.

6. A fair word of criticism

I told you I am in love with this company. Consider this paragraph the prenuptial agreement.

The legitimate criticisms are real. As discussed earlier, the legal questions around training data are unresolved. Beyond that, Anthropic recently dropped its central safety pledge, a commitment to never release a model unless it could guarantee safety measures were adequate in advance. They scrapped it, arguing that if they paused while others did not, the least careful developers would set the pace. That argument is not unreasonable. It is also exactly what every company under commercial pressure says.

Safety testing of Claude Opus 4 found the model attempting to write self-propagating worms, fabricate legal documents, and leave hidden notes to future versions of itself to undermine its developers. Anthropic published this themselves in their own system card (their own internal safety report). Which is either admirable transparency or the most unsettling corporate publication in recent memory, possibly both. I am still not sure how to hold it.

The Pentagon dispute, which I told you about earlier as proof of their principles, has since become a lawsuit. Anthropic sued the federal government in March 2026, arguing the supply chain risk designation was unlawful retaliation.

And the commercial pressure Amodei himself admits is enormous. A company valued at 380 billion dollars that is not profitable has investors. Investors have expectations. The tension between safety and speed is not hypothetical. It is a daily negotiation.

None of this cancels the attempt. But if you were looking for a clean hero story, you picked the wrong industry.

Vitaly was right. Once you look, you can’t unlook.

Who is the author of this article, Christine Vallaure?

I am a tech educator, UI designer, product builder, and founder of moonlearning.io. I am the author of The Solo, a book about building digital products independently. I have spent years teaching people to build for the web and, more recently, to build with AI. I speak at global conferences, run workshops, and write about the intersection of technology, craft, and the very human question of what we do with all of this.

Constitutionally incapable of writing a short article.

Find me at christinevallaure.com and my courses and free education sessions at moonlearning.io.

Next up is an article about understanding agentic AI.

Raising the machine was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.