The “phones are listening” conspiracy theory is only half the story. What’s actually happening raises harder questions.

You’re having a conversation with a friend. Out loud, over coffee, nowhere near a keyboard. You mention a brand, a holiday destination, a product you’ve been vaguely thinking about. You don’t search for it. You don’t type it. And then, a few hours later, there it is. An ad. Sitting in your feed like it was waiting for you.

Most people have had this experience. And most people, at some point, have reached the same verdict: the phone was listening.

It’s a tidy explanation. It fits the evidence. It maps onto everything we already half-suspect about Big Tech. And it is, most likely, wrong. At least in the way people tend to mean it. But here’s what makes this story worth telling properly: the version that’s probably true is stranger, and in several respects more troubling, than the one everyone believes.

A very widespread suspicion

Before getting into the research, it’s worth acknowledging how mainstream this belief actually is. This isn’t a fringe conspiracy whispered on Reddit. A 2024 peer-reviewed study by Segijn et al., published in Social Media + Society and surveying over 900 participants across the United States, the Netherlands, and Poland, found that between half and two-thirds of respondents believe electronic eavesdropping is a plausible explanation for receiving ads related to offline conversations. The suspicion was highest in the US, where participants also reported feeling the most unsettled by it. The pattern crossed cultural and regulatory contexts, showing up regardless of how familiar people were with data privacy.

So whatever is actually going on, the belief itself is genuinely widespread. That matters, because beliefs this entrenched shape behaviour, erode trust, and have real consequences for anyone building digital products.

What the studies actually found

The most thorough independent investigation into whether apps covertly use device microphones was conducted by researchers at Northeastern University. They analysed over 17,000 popular Android apps to determine whether any were secretly activating the microphone and transmitting audio to third parties. The result: not one. No unauthorised microphone activations. No hidden audio streams. A separate study by mobile security company Wandera, which focused specifically on high-profile data-hungry apps including Facebook, Instagram, and YouTube, reached the same conclusion.

There’s also a straightforward technical argument against mass audio surveillance. A former Facebook product manager calculated that if smartphones were continuously streaming audio to servers, the data volume would amount to roughly 130 MB per device per day, or around 20 petabytes per day across the US alone. By his estimate, that is roughly 33 times the volume of data Facebook was ingesting each day at the time. Doing it undetected, at scale, would be essentially impossible. It’s worth noting that the estimate comes from one of the company’s own former employees, which doesn’t make it wrong, but does make it worth treating as one data point rather than a definitive verdict.
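For anyone who wants to check the arithmetic, here is a minimal sketch. It takes the two quoted figures at face value and assumes decimal units; the implied device count and bitrate are derived from those figures and are not part of the original estimate.

```python
# Back-of-envelope check of the always-on audio estimate quoted above.
# Assumption: decimal units (1 MB = 10**6 bytes, 1 PB = 10**15 bytes).

MB = 10**6
PB = 10**15
SECONDS_PER_DAY = 24 * 60 * 60

per_device_bytes_per_day = 130 * MB  # per-device figure quoted in the article
us_total_bytes_per_day = 20 * PB     # US-wide figure quoted in the article

# How many devices do the two figures imply when taken together?
implied_devices = us_total_bytes_per_day / per_device_bytes_per_day

# What continuous bitrate does 130 MB per day correspond to?
implied_bitrate_kbps = per_device_bytes_per_day * 8 / SECONDS_PER_DAY / 1000

print(f"Implied devices: {implied_devices / 1e6:.0f} million")
print(f"Implied audio bitrate: {implied_bitrate_kbps:.1f} kbit/s")
```

Taken together, the two figures imply roughly 154 million devices, each streaming at about 12 kbit/s, which is in the range of compressed speech. The estimate is at least internally consistent.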

Apple, Google, and Meta have all stated explicitly that device microphones are not used for advertising. Apple later settled a lawsuit, filed in 2019, over Siri inadvertently capturing conversations, but emphasised that Siri data has never been used to build marketing profiles. Meta has maintained the same position publicly for years.

So the conspiracy, in its literal form, is not well supported. But this is where the story gets more interesting rather than less.

Where the evidence gets murky

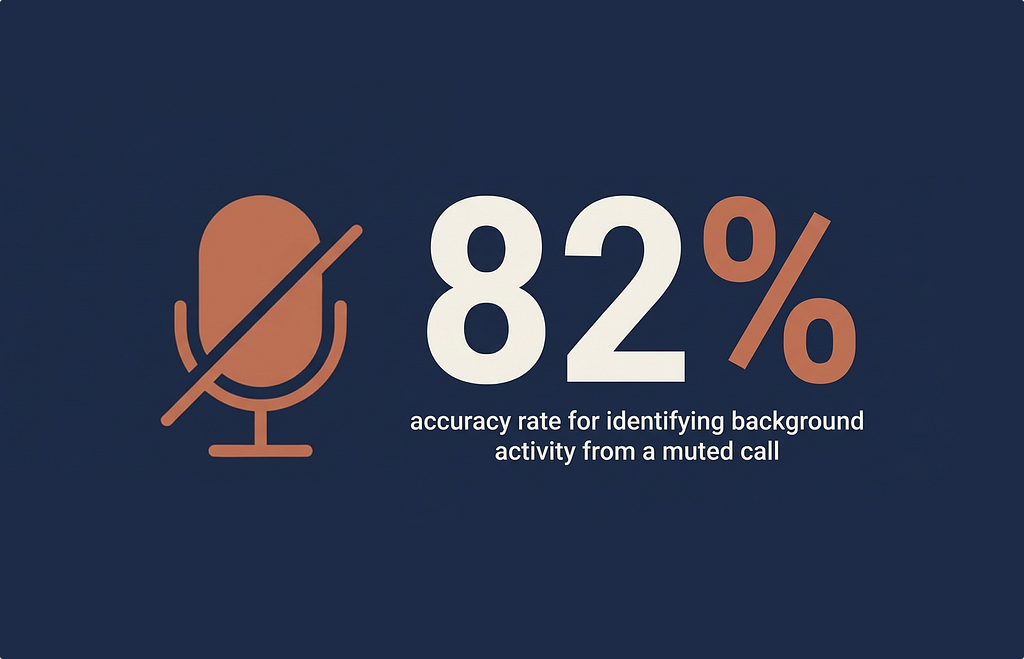

In 2022, researchers from the University of Wisconsin-Madison and Loyola University Chicago published a paper with an uncomfortable finding. Titled “Are You Really Muted?: A Privacy Analysis of Mute Buttons in Video Conferencing Apps,” the study examined ten widely-used video conferencing applications (Zoom, Slack, Microsoft Teams, Google Meet, Cisco Webex, and others) to determine what actually happens when a user hits the mute button.

The answer, it turned out, was not always what users assumed.

All ten of the apps studied had the technical ability to query the microphone even when the user had muted themselves. Most weren’t actively doing so in ways that raised significant concern. But Cisco Webex was the outlier. Unlike the others, Webex was found to be continuously sampling audio from the microphone while users were muted, transmitting statistics to its own servers once per minute. Not recordings of conversations, but audio-derived data: specifically measurements of volume and ambient sound levels.

The researchers then did something revealing with that data. Using a simple machine learning classifier, they were able to use Webex’s telemetry to identify what background activity a user was performing with 82% accuracy, across six categories including typing, cooking, and cleaning. The muted microphone was, in a meaningful sense, still listening.

Cisco responded by saying the data collection was intended to support features like mute notifications and echo cancellation, and updated Webex to stop transmitting microphone telemetry once the findings were made public. The researchers noted they found no evidence of anything “egregious”: audio wasn’t being stored or sold. But the lead author, Professor Kassem Fawaz, flagged something that’s hard to shake. The technology to perform local audio analysis without ever sending data to a server (the engine behind “Hey Siri” and “Hey Google”) is already present in these applications. The capability exists. Whether it is being used is a different question, and one that third parties have limited ability to verify.

Then there’s the Cox Media Group story, which surfaced via investigative outlet 404 Media in late 2023 and gained wider attention in 2024. A leaked pitch deck from CMG Local Solutions described a service it called “Active Listening.”

Using AI to combine voice data with behavioural data, the system claimed to identify “ready-to-buy” consumers based on their spoken intentions and deliver hyper-targeted advertising accordingly. The deck listed Facebook, Google, and Amazon as platform partners.

All three companies denied involvement. Google removed CMG from its partner programme. CMG later clarified that its product did not directly record conversations, but used “voice datasets from third-party providers”: data from voice-to-text features and other apps where users had knowingly shared audio input. The exact mechanism was never independently verified before the programme was reportedly discontinued.

What the CMG story confirmed, regardless of the technical specifics, is that the market for voice-derived data exists. Companies are interested in it. Someone was selling it. That’s not a conspiracy theory; it’s a documented commercial pitch.

None of this proves covert eavesdropping as such. But it does raise a harder question: if voice data is being traded commercially regardless of how it was captured, does the method of collection still matter to the person on the receiving end of it?

The Esade Business School also contributed a small but attention-grabbing study in which 27 participants held scripted ten-minute conversations near phones with microphones activated, discussing topics such as trips to Rome and New York. The result: in 100% of observations, at least one ad related to the conversation topic appeared within five days. The researchers concluded that mobile devices were listening and serving personalised content accordingly. The study has attracted legitimate methodological criticism (27 participants, no control condition, no ability to fully eliminate behavioural data as the explanatory mechanism), and it has not been independently replicated. It is suggestive, not conclusive. But it hasn’t gone away either.

So where does this leave us? No smoking gun. But also, not nothing.

The psychology of the uncanny ad

Even if audio surveillance of this kind isn’t happening, the experience of the uncanny ad (the one that seems to know what you just said) is real and widespread. And the cognitive mechanics behind why people interpret it as listening are worth understanding, because they’re the same mechanics that make this such a persistent belief.

Confirmation bias does a lot of the heavy lifting.

Over the course of a day, we have hundreds of conversations, think about thousands of things, and encounter hundreds of ads. The moments where an ad matches something we said are memorable and striking. The moments where it doesn’t, which are the vast majority, simply don’t register. We are not keeping a running tally. We are pattern-matching, and our brains are very good at finding patterns even when the underlying data doesn’t support them.

Illusory correlation compounds this.

When two things happen in quick succession (a conversation about a product, then an ad for that product) the brain generates a causal explanation. It feels like a connection because it looks like one. The lack of hard evidence doesn’t easily override a feeling that vivid.

There’s also the sheer scale of modern behavioural profiling to consider.

The more precisely ads are targeted, the more frequently genuine coincidences will occur, and the more each one will feel like it couldn’t possibly be random. Paradoxically, better advertising technology produces more moments that feel like surveillance, even when the explanation is rather mundane.
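The link between tighter targeting and more frequent coincidences can be sketched with a toy probability model. Everything in it is an assumption made for illustration: the function name, the idea that each ad is an independent draw from a fixed pool of topics, and all of the numbers.

```python
def chance_of_match(ads_seen, topics_discussed, ad_topic_pool):
    """Probability that at least one ad matches a recently discussed topic.

    Toy model (an assumption, not a measured result): every ad is drawn
    independently and uniformly from `ad_topic_pool` topics, and all
    discussed topics lie inside that pool.
    """
    p_single = min(1.0, topics_discussed / ad_topic_pool)
    # Complement of "none of the ads seen matches any discussed topic".
    return 1.0 - (1.0 - p_single) ** ads_seen


# Hypothetical inputs, chosen only to show the direction of the effect.
# Untargeted: ads spread across 10,000 possible topics.
untargeted = chance_of_match(ads_seen=100, topics_discussed=5, ad_topic_pool=10_000)
# Targeted: ads concentrated on 200 topics a profile already predicts.
targeted = chance_of_match(ads_seen=100, topics_discussed=5, ad_topic_pool=200)

print(f"untargeted: {untargeted:.0%}, targeted: {targeted:.0%}")
```

With these made-up inputs, the chance of seeing at least one matching ad in a day rises from about 5% to over 90% once the ads are concentrated on topics the profile already predicts. No microphone appears anywhere in the model.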

The truth that’s worse than the conspiracy

The literal conspiracy theory has a logistics problem. It requires secretive behaviour, technical infrastructure at impossible scale, and active deception across multiple major corporations simultaneously, all undetected. That’s partly why the independent technical research hasn’t found evidence of it.

What’s actually happening requires none of that. It runs entirely in the open, written into terms of service that nobody reads, and is far more comprehensive than audio capture could ever be.

Your phone knows where you are, at all times, with whom, and for how long. It knows who you called and when, and in some cases what was said (via voice-to-text features you’ve consented to). It knows what you’ve purchased, what you’ve browsed, what you’ve hovered over without clicking, what you’ve scrolled past, and at what speed. It knows what time you wake up, when your focus dips, and when you’re most likely to make an impulsive purchase. Through co-location data, it can infer who you socialise with and what those people are interested in. Through proximity to other devices, it can connect your offline behaviour to your online identity.

All of this data feeds into Real-Time Bidding (RTB), a Google-originated system that auctions user profiles to advertisers in milliseconds. According to the Irish Council for Civil Liberties, the average European’s data is shared in 376 RTB broadcasts per day. In the United States, that figure is 747. Each broadcast is a data handshake, sharing your inferred interests, location, and browsing context with potential advertisers. In 2021, RTB was a $117 billion industry globally.
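To put those daily averages in more tangible terms, here is a minimal sketch. The even spread over 24 hours is an assumption, and the example payload is hypothetical: its field names are loosely modelled on the OpenRTB 2.x convention, heavily simplified, and every value is made up.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def seconds_between_broadcasts(broadcasts_per_day):
    """Average gap between broadcasts, assuming an even spread over 24 hours."""
    return SECONDS_PER_DAY / broadcasts_per_day


# Daily averages quoted from the ICCL report in the text.
print(f"US: one broadcast every {seconds_between_broadcasts(747):.0f} s")
print(f"Europe: one broadcast every {seconds_between_broadcasts(376):.0f} s")

# Illustrative, simplified bid request. Hypothetical values throughout.
example_bid_request = {
    "id": "hypothetical-auction-id",
    "imp": [{"id": "1", "banner": {"w": 300, "h": 250}}],       # the ad slot for sale
    "site": {"page": "https://example.com/rome-travel-guide"},  # browsing context
    "device": {"geo": {"lat": 41.9, "lon": 12.5}},              # approximate location
    "user": {
        "id": "hypothetical-pseudonymous-id",
        "data": [{"segment": [{"name": "travel-intender"}]}],   # inferred interests
    },
}
```

On these averages, that works out to a broadcast roughly every two minutes around the clock in the US, and roughly every four minutes in Europe.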

The ad that appeared after your conversation about Rome didn’t need your audio. The algorithm had already established, from dozens of other signals, that you are the kind of person who thinks about travelling to Rome: your browsing history, your location near a travel agency, your connection to a friend who’d recently been there, your recent searches for “things to do in Italy.” The conversation was not the cause. It was a coincidence that landed in already-prepared soil.

As one technical analysis put it, algorithms have learned to read between the lines of our digital lives so thoroughly that eavesdropping has become unnecessary. And this, arguably, is the part that should give us pause. Not because it’s secret, but because it isn’t.

Where design enters the picture

At this point the story might feel like it belongs to regulators or journalists. But there’s a design dimension here that deserves more attention than it typically gets.

The Segijn et al. study didn’t just document the prevalence of the “phones listening” belief. It also found that people who attributed a conversation-related ad to eavesdropping were more unsettled by it than those who shrugged it off. The perceived surveillance triggered what researchers describe as a “dataveillance” response: a heightened sense of being watched, combined with a violation of what felt like a private boundary.

The brand being advertised was affected too. These ads caused enough discomfort that they negatively impacted the perception of the advertised product. The targeting that was meant to feel like relevance was landing as an intrusion.

This is a design problem as much as a privacy problem. When personalisation becomes precise enough to feel uncanny, it doesn’t feel like a feature. It feels like exposure. Users are not responding to what the system is actually doing; they’re responding to what it appears to be doing. And in experience design, that distinction is where the work actually is. A product that feels like it’s watching you will be perceived as surveilling, regardless of the technical reality.

There are also chilling effects to consider, a concept well-documented in surveillance research. When people believe they are being monitored (even if they aren’t), they modify their behaviour: they self-censor, they disengage, they become more guarded in what they say and do around devices. A 2022 study in the Journal of Business Research found that perceived dataveillance reduces user autonomy and increases the likelihood of withdrawal from digital services altogether. The user who felt creeped out by that ad isn’t just less likely to click it. They’re less likely to trust the platform it appeared on.

Product teams investing in personalisation to drive engagement may, at the extreme end, be building the conditions for the opposite. This is not an argument against personalisation. It is an argument for designing personalisation with its psychological footprint in mind.

The system will see you now

The “phones are listening” belief persists not because people are irrational, but because the inference is reasonable given the available evidence. The experience of receiving an eerily relevant ad really does happen. The technology that could explain it, ambient audio monitoring, really does exist inside the devices we carry. The companies involved have, in documented cases, pushed closer to that boundary than they’ve publicly admitted: a muted microphone that wasn’t fully muted, a marketing firm pitching voice data as a commercial product.

That the most likely explanation is actually algorithmic profiling from behavioural data (legal, technically disclosed, and running at a scale most people don’t fully appreciate) is not straightforwardly reassuring. It’s a different kind of problem.

Both sides of this debate tend to miss the same question. What does it mean to build systems so accurate in their inferences that users cannot meaningfully distinguish them from surveillance? And once you’ve built that, who is responsible for how it feels to be on the receiving end?

Those are not rhetorical questions. They are ones the industry is going to have to answer, one way or another, whether the phones are listening or not.

References & Credits

García-Martínez, A. (2017). Facebook’s not listening through your phone. It doesn’t have to. Wired. https://www.wired.com/story/facebooks-listening-smartphone-microphone/

The Brainy Insights. (2022). Real-time bidding market size, growth and forecast to 2032. https://www.thebrainyinsights.com/report/real-time-bidding-market-12845

Wandera. (2019). Is your phone listening to you? https://www.wandera.com/phone-listening/

Segijn, C. M., Strycharz, J., Turner, A., & Opree, S. J. (2024). Conversation-related advertising and electronic eavesdropping: Mapping perceptions of phones listening for advertising in the United States, the Netherlands, and Poland. Social Media + Society. https://journals.sagepub.com/doi/10.1177/20563051241288448

Yang, Y., West, J., Thiruvathukal, G. K., Klingensmith, N., & Fawaz, K. (2022). Are you really muted?: A privacy analysis of mute buttons in video conferencing apps. Proceedings on Privacy Enhancing Technologies Symposium. https://wiscprivacy.com/papers/vca_mute.pdf

Hill, K. (2018). These academics spent the last year testing whether your phone is secretly listening to you. Gizmodo. https://gizmodo.com/these-academics-spent-the-last-year-testing-whether-you-1826961188

López-López, D. et al. (2023). Is your phone listening to you to sell personalized ads? Esade Do Better. https://dobetter.esade.edu/en/phone-listening-personalized-ads

Cox, B. (2024). Is your phone really listening to you? Here’s what we know. Newsweek. https://www.newsweek.com/phone-voice-assistants-active-listening-consent-targeted-ads-1949251

Strycharz, J., & Segijn, C. M. (2022). The ethical side-effect of dataveillance: The impact of data collection, trust, and privacy concerns on chilling effects in the context of regulatory differences. Journal of Business Research, 173, 114490. https://doi.org/10.1016/j.jbusres.2023.114490

Irish Council for Civil Liberties. (2022). ICCL report on the scale of Real-Time Bidding data broadcasts in the U.S. and Europe. https://www.iccl.ie/news/iccl-report-on-the-scale-of-real-time-bidding-data-broadcasts-in-the-u-s-and-europe/

“Your phone isn’t eavesdropping. The reality is stranger.” was originally published in UX Collective on Medium.