The digital assembly line: Strategic orchestration and the industrialisation of user experience

Whether you’re a designer, developer, or manager building or launching software and applications, you’re caught in the AI and factory model dilemma.

The digital design and development landscape is currently experiencing a significant transition, moving away from artisanal, manual craftsmanship toward a hyper-efficient, automated paradigm often characterised as the factory model.

In a world where generative artificial intelligence (AI) can produce functional frontend code and sophisticated user interface (UI) layouts in minutes rather than days, the fundamental metrics of agency success — speed, cost, and quality — are being radically redefined.

This transformation is not merely a quantitative increase in output but a qualitative shift in the professional identity of the designer, who is moving from a primary role as a “creator” to a high-level role as an “orchestrator” of autonomous systems.

The adoption of a factory model in design agencies offers significant potential for profitability and shipment speed, yet it carries profound risks regarding brand homogenisation, technical debt, and the erosion of human-centered empathy.

The third age of software engineering

The move toward a factory model is best understood through the historical arc of software abstraction, a progression that has consistently aimed to raise the level at which humans interact with machines. This history is characterized by a shift from manipulating bits and instructions to managing functions, objects, services, and eventually, entire distributed systems. Each jump in this stack has expanded the population of builders while increasing individual productivity.

Currently, the industry is entering what is described as software’s “third age,” a golden era defined by the transition from writing instructions to defining intent.

In this age, the center of gravity has moved from the manual execution of code to the orchestration of systems that write code autonomously.

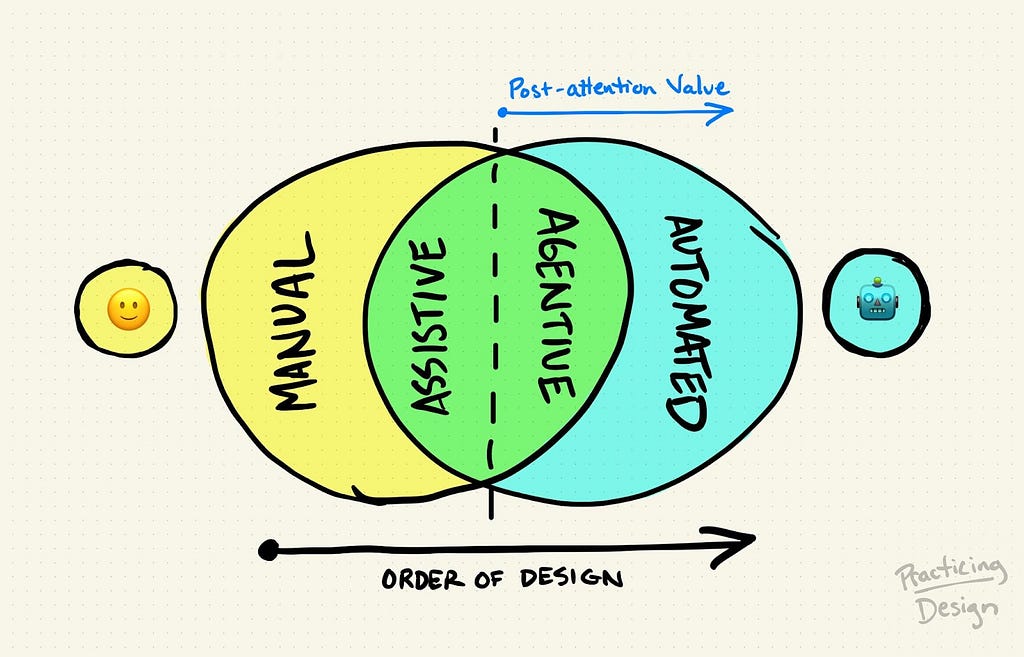

For design agencies, this evolution manifests in three distinct generations of AI integration.

The first generation focused on “accelerated autocomplete,” saving keystrokes on repetitive patterns and reducing friction within existing workflows.

The second generation introduced “synchronous agents,” where designers described tasks in natural language and reviewed AI-generated outputs in real time.

The third and current generation involves “autonomous agents” or “droids” that can take a high-level specification and independently execute tasks such as setting up environments, writing tests, researching solutions, and producing deployable artefacts.

This shift allows a single highly skilled engineer to manage the output of twenty or thirty parallel agents, fundamentally altering the economics of agency operations.

Technology acceptance and satisfaction

To determine whether an agency should adopt a one-size-fits-all factory model, one must examine the psychological and economic factors that drive the acceptance and satisfaction of AI-driven solutions.

The Technology Acceptance Model (TAM) provides a critical lens for this analysis, positing that adoption is primarily driven by “perceived usefulness” (PU) and “perceived ease of use” (PEOU). In organisational settings, top management support is the primary catalyst that enhances technology acceptance, while PU remains the core factor driving long-term adoption. For a factory model to succeed, the AI tools must not only be efficient but must also demonstrate measurable improvement in decision-making and performance.

The Kano Model further elucidates how specific AI-driven features contribute to user satisfaction. It distinguishes between “threshold” attributes (the basic price of entry), “one-dimensional” attributes (performance-linked satisfaction), and “attractive” attributes (delighters that create competitive advantage).

Research increasingly indicates that the hierarchy of user needs for AI-generated content (AIGC) services is becoming more structured. For instance, data privacy and security are categorised as “must-be” (threshold) attributes with high “worse” coefficients, meaning their failure destroys trust regardless of other capabilities. Conversely, features like “novel hypothesis brainstorming” are high-value “attractive” attributes that can distinguish an agency in a crowded market. A factory model that prioritises a “one-size-fits-all” approach risks fulfilling only the “must-be” and “one-dimensional” needs, failing to provide the “delighters” that high-end clients demand.
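Kano categories are usually derived from a pair of survey questions. The functional question asks how a user feels if a feature is present, and the dysfunctional question asks how they feel if it is absent. The sketch below encodes the standard Kano evaluation table in Python. The answer labels and the sample responses are hypothetical, and real studies typically check sample sizes before trusting the dominant category.

```python
from collections import Counter

# Answer options, in the column order of the Kano evaluation table.
ANSWERS = ["like", "must-be", "neutral", "live-with", "dislike"]

# Rows: answer to the functional question ("feature present").
# Columns: answer to the dysfunctional question ("feature absent").
# A = attractive, O = one-dimensional, M = must-be (threshold),
# I = indifferent, R = reverse, Q = questionable (contradictory answers).
KANO_TABLE = {
    "like":      ["Q", "A", "A", "A", "O"],
    "must-be":   ["R", "I", "I", "I", "M"],
    "neutral":   ["R", "I", "I", "I", "M"],
    "live-with": ["R", "I", "I", "I", "M"],
    "dislike":   ["R", "R", "R", "R", "Q"],
}


def classify(functional: str, dysfunctional: str) -> str:
    """Map one respondent's (functional, dysfunctional) answer pair to a category."""
    return KANO_TABLE[functional][ANSWERS.index(dysfunctional)]


def dominant_category(responses) -> str:
    """Return the most frequent category across a list of answer pairs."""
    counts = Counter(classify(f, d) for f, d in responses)
    return counts.most_common(1)[0][0]


# Hypothetical responses for a data-privacy feature.
privacy = [("neutral", "dislike"), ("must-be", "dislike"),
           ("live-with", "dislike"), ("like", "dislike")]
print(dominant_category(privacy))  # M
```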

Quantitative analysis of AI service demands

Using the Kano Model’s better-worse coefficients, researchers have identified specific areas where AI-driven automation provides the greatest leverage for user satisfaction.

These findings suggest that users value the speed of summarisation (a one-dimensional, performance-linked attribute), but they are most delighted by the AI’s ability to generate new directions and surface niche insights. Agencies adopting a factory model must ensure that their automated workflows do not merely replicate existing data but also facilitate this “delightful” creative discovery.
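Better-worse coefficients are commonly computed from each feature's category counts:

- Better = (A + O) / (A + O + M + I)
- Worse = -(O + M) / (A + O + M + I)

Reverse and questionable answers are excluded. A minimal Python sketch follows. The two feature profiles are invented for illustration and are not the data from the research referred to here.

```python
def better_worse(counts: dict) -> tuple:
    """Return (better, worse) Kano coefficients from category counts.

    counts maps "A", "O", "M", "I" to the number of respondents in each
    category; "R" and "Q" answers are ignored, as is conventional.
    """
    a, o, m, i = (counts.get(k, 0) for k in ("A", "O", "M", "I"))
    total = a + o + m + i
    if total == 0:
        raise ValueError("no classifiable responses")
    better = (a + o) / total    # 0..1: satisfaction gained if present
    worse = -(o + m) / total    # -1..0: dissatisfaction if absent
    return better, worse


# Hypothetical category counts for two AIGC features.
privacy = {"A": 5, "O": 20, "M": 60, "I": 15}
brainstorming = {"A": 55, "O": 15, "M": 5, "I": 25}
print(better_worse(privacy))        # (0.25, -0.8)
print(better_worse(brainstorming))  # (0.7, -0.2)
```

A high absolute "worse" value marks a feature whose absence hurts. A high "better" value marks a delighter.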

The cognitive science of automated UI design

The rapid generation of multiple prototypes is a core feature of the factory model, yet the effectiveness of these prototypes must be evaluated against established principles of human-computer interaction (HCI).

Two critical laws — Hick’s Law and Fitts’s Law — govern how users navigate and make decisions within a digital interface.

Hick’s Law and choice overload

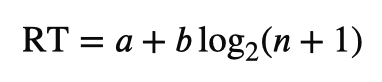

Hick’s Law states that the time it takes for a person to make a decision increases with the number and complexity of choices available. The mathematical formulation is:

$$RT = a + b \log_2(n + 1)$$

where RT is the reaction time, n is the number of choices, and a and b are empirical constants.
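The Python sketch below evaluates RT = a + b·log₂(n + 1) for two menu sizes. The constants a = 0.2 s and b = 0.15 s are placeholders chosen for readable numbers, not measured values. In practice they are fitted from user testing of a specific interface.

```python
import math


def hick_reaction_time(n_choices: int, a: float = 0.2, b: float = 0.15) -> float:
    """Hick's Law: RT = a + b * log2(n + 1), in seconds.

    a and b are placeholder constants; real values must be fitted from data.
    """
    if n_choices < 1:
        raise ValueError("need at least one choice")
    return a + b * math.log2(n_choices + 1)


# Trimming a menu of 15 options to 3, e.g. via progressive disclosure.
full_menu = hick_reaction_time(15)   # 0.2 + 0.15 * log2(16) = 0.2 + 0.15 * 4
trimmed = hick_reaction_time(3)      # 0.2 + 0.15 * log2(4)  = 0.2 + 0.15 * 2
print(round(full_menu, 2), round(trimmed, 2))  # 0.8 0.5
```

Decision time grows logarithmically, so each extra option costs a little less than the one before. The cost still compounds when every screen or review round offers too many choices.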

In an AI-driven factory model, the ease of creating “multiple prototypes” can lead to the “paradox of choice”.

If designers generate dozens of variations without a clear strategic filter, they may suffer from “analysis paralysis,” delaying shipment and increasing cognitive load for both the team and the end-user.

Effective AI integration should therefore focus on “progressive disclosure,” showing only necessary information and reducing the selection options to the essentials.

Fitts’s Law and movement accuracy

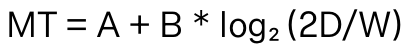

Fitts’s Law describes the relationship between the size of a target and its distance, stating that larger and closer targets are easier to acquire. A widely used (Shannon) formulation is:

$$MT = A + B \log_2\left(\frac{D}{W} + 1\right)$$

where:

- MT, or movement time, is the time it takes to move to a target.

- A and B are empirically fitted constants that depend on the input device (such as a mouse or a finger); A also absorbs fixed costs such as reaction time.

- D is the distance to the target.

- W is the width of the target.

- log₂ is the base-2 logarithm; the logarithmic term, known as the index of difficulty, is measured in bits.
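A small Python sketch can turn these definitions into a comparison between two layouts. It assumes the Shannon formulation of Fitts's Law, MT = A + B·log₂(D/W + 1), which is one of several published variants. The constants A = 0.1 s and B = 0.15 s are placeholders, and distance and width only need to share a unit.

```python
import math


def index_of_difficulty(distance: float, width: float) -> float:
    """Shannon formulation of Fitts's index of difficulty, in bits."""
    if width <= 0 or distance < 0:
        raise ValueError("width must be positive and distance non-negative")
    return math.log2(distance / width + 1)


def movement_time(distance: float, width: float,
                  a: float = 0.1, b: float = 0.15) -> float:
    """MT = A + B * ID; a and b are placeholder device constants (seconds)."""
    return a + b * index_of_difficulty(distance, width)


# The same 10 mm button, reached from 70 mm away versus 30 mm away.
far = movement_time(70, 10)    # ID = log2(8) = 3 bits
near = movement_time(30, 10)   # ID = log2(4) = 2 bits
print(round(far, 2), round(near, 2))  # 0.55 0.4
```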

AI-generated layouts frequently struggle with these ergonomic considerations. For example, a “build-from-scratch” AI generator might place primary action buttons at the top of a smartphone screen (high distance for the thumb) or create buttons that are too small for touch accuracy. Agencies must therefore move beyond simple layout generation and evaluate AI outputs against these motor-skill models to ensure usability.
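One way to evaluate generated layouts against these ergonomic concerns is a simple automated audit. The Python sketch below flags two kinds of problem:

- Targets smaller than 48 × 48 units. Material Design recommends a minimum touch target of 48 × 48 dp, and Apple's Human Interface Guidelines suggest 44 × 44 pt.
- Primary actions placed in the top third of the screen.

The top-third reach rule and the sample layout are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass

MIN_TARGET = 48  # dp; Material Design's recommended minimum touch target


@dataclass
class Target:
    name: str
    width: float
    height: float
    center_y: float        # distance from the top of the screen
    primary: bool = False


def audit_touch_targets(targets, screen_height, min_size=MIN_TARGET,
                        hard_reach_fraction=1 / 3):
    """Return (name, issue) pairs for undersized or hard-to-reach targets.

    hard_reach_fraction is an assumed heuristic: primary actions whose centre
    sits in the top third of the screen are treated as hard to reach one-handed.
    """
    issues = []
    for t in targets:
        if t.width < min_size or t.height < min_size:
            issues.append((t.name, "too_small"))
        if t.primary and t.center_y < screen_height * hard_reach_fraction:
            issues.append((t.name, "hard_to_reach"))
    return issues


# A hypothetical AI-generated screen, 800 dp tall.
layout = [
    Target("back", 40, 40, 30),
    Target("buy_top", 320, 56, 60, primary=True),
    Target("buy_bottom", 320, 56, 740, primary=True),
]
print(audit_touch_targets(layout, 800))
# [('back', 'too_small'), ('buy_top', 'hard_to_reach')]
```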

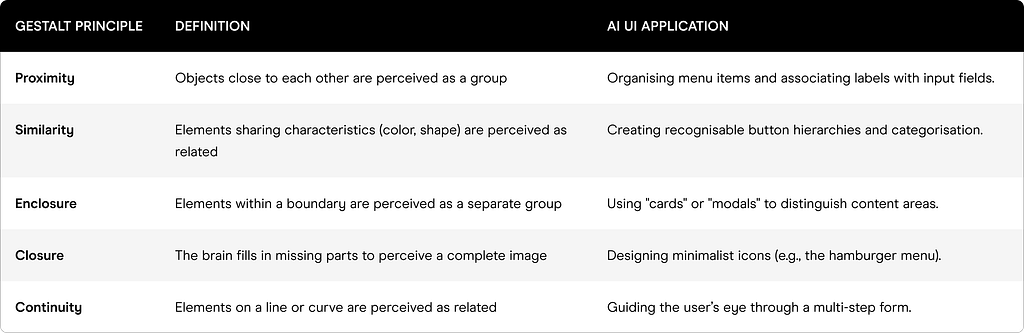

Gestalt principles and visual organisation

The evaluation of AI-generated UI also requires adherence to Gestalt principles, which describe how humans perceive and organise visual information into cohesive wholes.

AI tools often follow “patterns” learned from training data, but they may miss the subtle nuance required for high-level visual coherence.

Current research suggests that the interaction between size and distance (similarity and proximity) significantly influences the perception of visual hierarchy. While AI can quickly suggest layouts, the “real choices” regarding what actually works for a human user must still come from a designer who understands these psychological mediators.
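Proximity can be partly checked mechanically. The Python sketch below applies one assumed heuristic: the largest gap inside any visual group should be smaller than the smallest gap between groups. This is a rough proxy for the proximity principle, not a formal Gestalt metric, and judgements about hierarchy stay with the designer. Element positions and group labels are hypothetical.

```python
def proximity_consistent(elements) -> bool:
    """Check that spacing separates groups more than it separates members.

    elements: (group, top, bottom) tuples for vertically stacked items, in any
    order. Returns True when every intra-group gap is smaller than every
    inter-group gap.
    """
    ordered = sorted(elements, key=lambda e: e[1])
    intra, inter = [], []
    for prev, cur in zip(ordered, ordered[1:]):
        gap = cur[1] - prev[2]          # space between prev's bottom and cur's top
        (intra if prev[0] == cur[0] else inter).append(gap)
    if not intra or not inter:
        return True                     # nothing to compare
    return max(intra) < min(inter)


# Label/input pairs for two form fields (positions in px from the top).
good = [("name", 0, 20), ("name", 28, 68), ("email", 100, 120), ("email", 128, 168)]
# Same fields, but each label sits closer to the previous field's input.
bad = [("name", 0, 20), ("name", 44, 84), ("email", 92, 112), ("email", 136, 176)]
print(proximity_consistent(good), proximity_consistent(bad))  # True False
```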

Redefining the Double Diamond: the shift to living systems

The traditional Double Diamond process — Discover, Define, Develop, and Deliver — is being reshaped by AI into a more proactive and evidence-based framework. In 2025 and 2026, the focus has shifted from “whiteboard thinking” to “proactive experience planning,” where products are treated as living systems that learn and adapt even before they are fully launched.

Phase 1: Discover — Behavioural evidence over gut instinct

The first diamond is centered on understanding the right problem. Traditionally, this required weeks of user interviews and surveys. AI now provides teams with access to real user behaviours at scale, identifying “hesitation points,” “dead taps,” and “repeated exits” from session recordings before a redesign even begins. This reduces “UX risk” by providing clarity on what moves the numbers before resources are committed to build.
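Signals such as dead taps and hesitation points can be derived from an event stream. The Python sketch below assumes a hypothetical, simplified event schema of timestamped "tap" and "ui_change" events, not any particular analytics product's format. The 500 ms response window and 4 s idle threshold are illustrative defaults that a team would tune.

```python
from dataclasses import dataclass


@dataclass
class Event:
    t_ms: int          # milliseconds since session start
    kind: str          # "tap" or "ui_change" (navigation, render, feedback)
    target: str = ""


def dead_taps(events, window_ms=500):
    """Taps that are not followed by any UI change within window_ms."""
    events = sorted(events, key=lambda e: e.t_ms)
    dead = []
    for i, e in enumerate(events):
        if e.kind != "tap":
            continue
        responded = any(
            later.kind == "ui_change" and later.t_ms - e.t_ms <= window_ms
            for later in events[i + 1:]
        )
        if not responded:
            dead.append(e)
    return dead


def hesitation_points(events, idle_ms=4000):
    """(target, pause_ms) for pauses of at least idle_ms before the next event."""
    events = sorted(events, key=lambda e: e.t_ms)
    return [
        (prev.target, cur.t_ms - prev.t_ms)
        for prev, cur in zip(events, events[1:])
        if cur.t_ms - prev.t_ms >= idle_ms
    ]


session = [
    Event(0, "tap", "banner"),          # decorative banner: nothing happens
    Event(2000, "tap", "buy"),
    Event(2200, "ui_change", "cart"),
    Event(7000, "tap", "checkout"),     # 4.8 s pause on the cart screen
    Event(7300, "ui_change", "checkout"),
]
print([e.target for e in dead_taps(session)])  # ['banner']
print(hesitation_points(session))              # [('cart', 4800)]
```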

Phase 2: Define — Strategic prioritisation and intent signals

The insight gathered in discovery helps define the challenge in new ways. AI-backed tools make “patterns and intents” visible to the whole team — including PMs and founders — without requiring heavy reporting from specialised analysts. This speeds up alignment and reduces the “back-and-forth” typical of manual research cycles. By clustering usage patterns and click depth, AI helps roadmaps move from being driven by assumptions to being driven by real demand.
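As a minimal illustration of clustering usage data, the Python sketch below runs a one-dimensional k-means over per-session click depth. The data are hypothetical. The implementation is deliberately naive, with a fixed iteration cap and deterministic initialisation across the sorted range. Production tools would cluster on richer features, for example with a library such as scikit-learn.

```python
def kmeans_1d(values, k, max_iter=20):
    """Naive 1-D k-means; returns (centroids, clusters)."""
    if not 1 <= k <= len(values):
        raise ValueError("k must be between 1 and the number of values")
    ordered = sorted(values)
    step = (len(ordered) - 1) / max(k - 1, 1)
    # Deterministic start: centroids spread across the sorted values.
    centroids = [float(ordered[round(i * step)]) for i in range(k)]
    clusters = []
    for _ in range(max_iter):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda c: abs(v - centroids[c]))
            clusters[nearest].append(v)
        # Keep the old centroid if a cluster ends up empty.
        updated = [sum(c) / len(c) if c else centroids[j]
                   for j, c in enumerate(clusters)]
        if updated == centroids:
            break
        centroids = updated
    return centroids, clusters


# Hypothetical click depth (pages visited) for ten sessions.
depths = [1, 2, 1, 2, 3, 8, 9, 10, 9, 1]
centroids, clusters = kmeans_1d(depths, k=2)
print(centroids)  # [1.6666666666666667, 9.0]
```

The two centroids separate shallow "bounce" sessions from deep exploratory ones. This is the kind of demand signal a roadmap discussion can start from.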

Phase 3: Develop — AI design sprints and agentic readiness

The second diamond focuses on exploring different answers to the defined problem. This is where the “factory model” of rapid prototyping is most pronounced. AI Design Sprints are now being used to turn framed opportunities into tested solutions in as little as four days.

- Day 1: Understand and Define (using AI friction heat maps).

- Day 2: Ideate and Decide (mapping AI interventions).

- Day 3: Prototype and Test (generating multiple variations).

- Day 4: Iterate and Test Again (learning from real user interactions).
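Testing generated variations raises the question of whether one genuinely outperforms another. One common approach, sketched below in Python, is a two-proportion z-test on conversion counts. The counts are hypothetical. The test relies on a normal approximation that is unreliable for very small samples, and Bayesian or sequential methods are alternative ways to read such results.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) comparing conversion rates of A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: P(|Z| >= |z|) = erfc(|z| / sqrt(2)) for standard normal Z.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: 40/400 conversions for variation A, 64/400 for B.
z, p = two_proportion_z_test(40, 400, 64, 400)
print(f"z = {z:.2f}, p = {p:.3f}")
```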

Phase 4: Deliver — Small-scale testing and continuous optimisation

Delivery involves testing solutions at small scales and improving those that work. In the AI world, this phase never truly ends. “Adaptive interfaces” can change their navigation or content based on individual usage patterns in real time. This “systemic intelligence” allows agencies to deploy websites that are pre-optimised for conversions rather than relying solely on post-launch trial and error.
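At its simplest, an adaptive navigation pattern reorders menu items by an individual's usage. The Python sketch below assumes hypothetical per-user tap counts. It keeps some items pinned so the interface does not become unpredictable. Real adaptive systems usually add recency weighting and limit how often the order may change.

```python
def adapt_navigation(items, usage_counts, pinned=(), max_items=None):
    """Reorder navigation items by per-user usage.

    Pinned items keep their original position at the front; the rest are
    sorted by usage (descending). Python's sort is stable, so ties keep the
    designer's original order.
    """
    fixed = [item for item in items if item in pinned]
    flexible = [item for item in items if item not in pinned]
    flexible.sort(key=lambda item: usage_counts.get(item, 0), reverse=True)
    ordered = fixed + flexible
    return ordered if max_items is None else ordered[:max_items]


nav = ["Home", "Shop", "Blog", "Support", "Account"]
usage = {"Support": 12, "Account": 7, "Blog": 1}
print(adapt_navigation(nav, usage, pinned=("Home",)))
# ['Home', 'Support', 'Account', 'Blog', 'Shop']
```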

Pros and cons of the factory model in design agencies

The decision to adopt a factory model is a strategic one, balancing the need for immediate shipment speed with the long-term stability and unique value of the agency’s work.

Profitability perspective

From a profitability standpoint, the factory model offers several advantages.

- It acts as a “multiplier of output,” allowing a single senior designer to orchestrate a volume of work that previously required a large team, thus increasing the output per billable hour.

- It also reduces overhead by automating “mechanical toil” — the repetitive tasks like asset resizing, boilerplate coding, and initial wireframing.

- Agencies that adopt AI-driven workflow optimisation have reported a 30–50% boost in customer satisfaction and a 40–70% reduction in cycle times.
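It can help to translate a cycle-time reduction into capacity. If a team's capacity stays fixed and every job shrinks by a fraction r, throughput rises by a factor of 1 / (1 - r). The Python sketch below applies that simplifying assumption to the reported 40–70% range. It illustrates the arithmetic only and does not validate the reported figures.

```python
def throughput_multiplier(cycle_time_reduction: float) -> float:
    """Jobs per unit time after a fractional cycle-time cut, relative to before.

    Assumes fixed capacity and that the reduction applies to the whole cycle.
    """
    if not 0 <= cycle_time_reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return 1 / (1 - cycle_time_reduction)


for r in (0.4, 0.7):
    print(f"{r:.0%} shorter cycles -> {throughput_multiplier(r):.2f}x throughput")
# 40% shorter cycles -> 1.67x throughput
# 70% shorter cycles -> 3.33x throughput
```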

Innovation and quality perspective

However, the “cost-cutting” aspect of the factory model can be a double-edged sword for innovation. While it allows for faster experimentation, it can also lead to “regression of unique problem solving”. AI agents tend to optimise for “safe” or “statistically likely” solutions found in their training data, which can lead to “unoriginal” code and “conceptually hollow” designs.

A significant risk is “premature alignment,” where teams rally around the first tangible AI-generated direction because it looks polished and “thought through,” even if the underlying logic is shallow.

This “illusion of objectivity” can cause stakeholders to stop questioning decisions, as the neutral tool appears to have “done the thinking”.

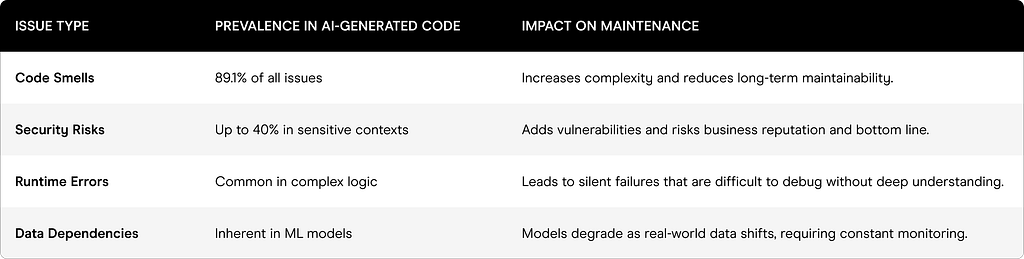

Technical debt and the “vibe, then verify” culture

The shift to an AI-driven factory model in frontend development has introduced a “great toil shift,” where old burdens like manual documentation are replaced by new burdens like correcting AI-generated code.

1. Trust gap and code quality

- While 93% of developers cite measurable benefits from AI, 88% also report negative impacts on technical debt.

- A major concern is that 53% of developers report AI generates code that “appears correct yet introduces hidden defects”.

- In real-world projects, more than 15% of commits from AI assistants introduce at least one issue, and 24.2% of these issues survive in the latest revisions of repositories as accumulated technical debt.

2. Verification strategies: Beyond manual review

To manage this, teams must move away from manually reviewing every line — which does not scale — and instead adopt automated “quality assurance” tools. The “vibe, then verify” culture encourages designers and developers to “vibe” (experiment and generate ideas boldly) while ensuring they “verify” (use static analysis and deterministic rules-based reviews). This “deterministic guardrail” is essential for mitigating the tendency of large language models (LLMs) to hallucinate incorrect code.
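A deterministic guardrail can be as small as a rule set run over every generated file. The Python sketch below uses the standard library's ast module to flag two illustrative rules: bare except: clauses, and calls to eval or exec. The rule choice is an assumption for demonstration. Teams would normally rely on established analysers such as Ruff, ESLint, or Semgrep rather than hand-rolled checks.

```python
import ast

RISKY_CALLS = {"eval", "exec"}


def lint_generated_code(source: str):
    """Return sorted (line, rule) findings for a Python source string.

    The same input always yields the same findings, unlike an LLM reviewer.
    """
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        return [(err.lineno or 0, "syntax-error")]
    findings = []
    for node in ast.walk(tree):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append((node.lineno, "bare-except"))
        elif (isinstance(node, ast.Call)
              and isinstance(node.func, ast.Name)
              and node.func.id in RISKY_CALLS):
            findings.append((node.lineno, f"risky-call:{node.func.id}"))
    return sorted(findings)


generated = "\n".join([
    "def load(config_text):",
    "    try:",
    "        return eval(config_text)",
    "    except:",
    "        return None",
])
print(lint_generated_code(generated))
# [(3, 'risky-call:eval'), (4, 'bare-except')]
```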

3. Brand homogenisation and the ethics of agentic AI

As design agencies move toward a factory model, the risk of “brand homogenisation” becomes acute. Because GenAI platforms compress concept development cycles, they often produce identities that are visually competent but lack the “emotional nuance” and “strategic foundations” of human-led work.

4. Risk to authorship

Early adoption of AI in branding reveals a “diminishing sense of personal ownership” among designers. When tools like Looka or Brandmark are used to automate logo variations, the designer’s role shifts from “author” to “curator,” training them to react rather than decide.

Over time, this can erode the human judgment necessary to distinguish truly “good” design from “merely acceptable” design.

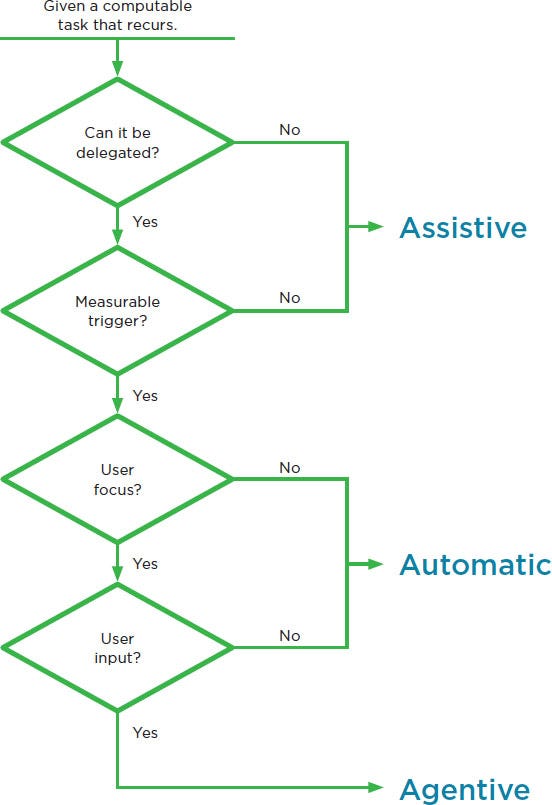

5. Ethical concerns in agentic UX

The introduction of “agentic AI” — systems that act autonomously on behalf of users — creates new ethical challenges for UX researchers.

- Loss of Control: Users shift from being “drivers” to “passengers,” which can create psychological tension if agents move funds or make choices the user doesn’t understand.

- Contextual Empathy Failure: Agents might achieve a technical goal (e.g., maximising savings) but fail to understand human context like risk tolerance or emotional anxiety.

- Black Box Anxiety: Lack of transparency in AI reasoning can lead to “automation bias” (blind trust) or “automation rejection”.

Strategic designers must therefore act as “ethical arbitrators,” identifying “critical transparency moments” where the agent shows its work to maintain user control.
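One way to implement critical transparency moments is to gate an agent's actions. Anything irreversible or above a risk threshold pauses, shows the agent's rationale, and waits for the user. The Python sketch below is hypothetical throughout: the AgentAction fields, the 100-unit threshold, and the confirm callback are illustrative, not any framework's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentAction:
    description: str
    amount: float       # e.g. money moved, in the user's currency
    reversible: bool
    rationale: str      # the agent's explanation, shown to the user


def needs_transparency_moment(action: AgentAction, threshold: float = 100.0) -> bool:
    """Pause for the user when an action is irreversible or large."""
    return not action.reversible or action.amount >= threshold


def run_action(action: AgentAction, confirm: Callable[[str], bool]) -> str:
    """Execute directly, or first show the rationale and ask for consent."""
    if needs_transparency_moment(action):
        prompt = f"{action.description}\nWhy: {action.rationale}"
        if not confirm(prompt):
            return "cancelled"
    return "executed"


small = AgentAction("Round up purchase into savings", 2.5, True, "Matches your rule")
large = AgentAction("Move 500 to a fixed-term account", 500, False,
                    "Higher interest than your current account")
print(run_action(small, confirm=lambda msg: False))  # executed (no prompt shown)
print(run_action(large, confirm=lambda msg: False))  # cancelled
```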

The way forward: From factory operator to strategic orchestrator

The ultimate question is not whether to adopt AI, but how to use it to provide “user-centric solutions” rather than “one-size-fits-all” commodities. The strongest design results in 2026 come from a “hybrid workflow” that blends AI’s efficiency with human creativity and strategic intuition.

Human-AI hybrid operating model

Agencies that will lead the next wave of digital transformation are those that move from “deployment to direction”. In this model, the “designer as orchestrator” handles the judgment calls that no algorithm can replicate: client relationship nuance, brand fit DNA, seasonal context, and supply chain feasibility.

Transitioning to strategic orchestration

To achieve this, agencies should treat user experience as a business strategy, moving beyond execution to focus on revenue models, retention metrics, and organisational goals. This involves:

- Designing for Stakeholders: Recognising that users are only part of the equation and that friction can sometimes be a strategic tool (e.g., Spotify’s calculated friction for free users).

- Refinement Strategy: Using AI to generate “contrasting approaches” to avoid converging prematurely on a solution.

- Agentic Readiness: Building design systems that treat the design language as a programmable data layer, providing structured context to autonomous agents via protocols like the Model Context Protocol (MCP).
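Treating the design language as a data layer can be as simple as publishing tokens in a machine-readable form and validating agent output against them. The Python sketch below uses hypothetical token names and values. An MCP server could expose the JSON as a resource and the validator as a tool, but that wiring is not shown and no MCP SDK calls are assumed.

```python
import json

# Hypothetical design tokens for one brand.
TOKENS = {
    "color": {"primary": "#0B5FFF", "surface": "#FFFFFF", "text": "#1A1A1A"},
    "space": {"sm": 8, "md": 16, "lg": 24},
}


def tokens_as_json(tokens=TOKENS) -> str:
    """Serialise tokens deterministically so agents always see the same context."""
    return json.dumps(tokens, sort_keys=True, indent=2)


def validate_component(spec: dict, tokens=TOKENS):
    """Return (property, value) pairs that are not valid 'group.name' token refs.

    Raw values such as '#FF00FF' are rejected, which keeps agent-generated
    components inside the brand's vocabulary.
    """
    violations = []
    for prop, ref in spec.items():
        group, _, name = ref.partition(".")
        if name not in tokens.get(group, {}):
            violations.append((prop, ref))
    return violations


agent_card = {"background": "color.surface", "padding": "space.md",
              "border": "#FF00FF", "gap": "space.xl"}
print(validate_component(agent_card))
# [('border', '#FF00FF'), ('gap', 'space.xl')]
```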

Conclusion: Future of the design economy

- The “factory model” is a useful operational strategy for handling the “mechanical weight” of design and frontend production, but it must be subservient to a “strategic orchestration” model that preserves human insight.

- Agencies that prioritise “consistency at velocity” without sacrificing “meaningful connection” will set the benchmark for agility and trust in the 2026 design economy.

- The successful agency of the future will not be a factory where “one size fits all,” but a “boutique journey” platform defined by hyper-personalisation, ethical stewardship, and the expert orchestration of human and machine synergy.

AI, UX, and the factory model was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.