AI Course Creation: Faster, But Smarter?

The newest wave of GenAI tools for learning has made one thing clear: course creation is getting faster.

Anthropic’s Claude Design is positioned as a way to co-create polished visual work such as designs, prototypes, slides, and one-pagers. [1]

Articulate says its AI Assistant can turn prompts or source documents into outlines, drafts, lesson content, quizzes, images, audio, and interactive blocks inside Storyline and Rise. [2]

Easygenerator says its AI can convert documents into courses, generate questions, and translate into more than 75 languages. [3]

iSpring promotes AI-powered interactive course creation, quizzes, images, and translations inside its platform. [4]

Synthesia says it can generate structured training courses, scripts, quizzes, and AI-video-based learning from prompts, documents, and URLs. [5]

Coursebox says it can turn content into lessons, quizzes, videos, and interactive elements in minutes. [6]

Elucidat says its AI can generate outlines built on best-practice learning design. [7]

In other words, the market is moving quickly from AI assistance to AI-led course generation.

That progress is real. It would be foolish to deny it. These AI tools can reduce manual drafting, speed up first-pass structure, lower the barrier to producing training assets, and help teams move faster from source material to something tangible.

Some are strong in visual prototyping. Some are strong in document-to-course conversion. Some are strong in video generation. Some are embedding AI directly into established authoring workflows. The productivity gain is obvious.


Course Generation = Instructional Design?

But that is not the whole story.

The real weakness of most of these tools is not that they generate poor-looking output. In fact, they often generate output that looks surprisingly polished. The deeper weakness is that they still treat course creation too much like a production problem and not enough like a judgment problem.

They are very good at helping users produce, convert, draft, and assemble. They are much weaker at supporting the kind of structured human-AI collaboration that good Instructional Design actually requires.

That is the gap.

Because course generation is not the same thing as Instructional Design.

A tool can generate a course outline, quizzes, slides, videos, and interactions. That does not mean it has done the harder work of Instructional Design. It has not necessarily:

- Identified the real performance problem.

- Clarified what learners actually need to do differently.

- Distinguished critical content from background noise.

- Chosen the right learning treatment.

- Built meaningful practice.

- Pressure-tested whether the assessment truly reflects the objective.

Those are not cosmetic tasks. They are the heart of the profession.

This is where the current market language needs more skepticism. When an eLearning vendor says it can create courses "in minutes" from URLs, prompts, or scripts, that may be true at the level of output generation. But a course assembled quickly from source content is still not automatically a sound learning solution. Fast assembly is not the same as sound design.


Take the tools themselves on their own terms. Claude Design is described by Anthropic as a collaborative visual design product for prototypes, slides, and related outputs. That is significant. It brings Claude closer to the authoring space. But it is still not positioned by Anthropic as a full enterprise eLearning authoring platform with the governance, packaging, reporting, review, and workflow controls that learning teams depend on.

Articulate AI Assistant is more directly embedded in an authoring environment and can generate course drafts, images, audio, quizzes, and interactive blocks within Rise and Storyline.

Easygenerator emphasizes drag-and-drop authoring, document conversion, scenarios, walkthroughs, translation, and LMS publishing. iSpring emphasizes AI-powered scrollable courses, quizzes, images, and translations. Synthesia emphasizes AI-generated video-based training creation from prompts and documents.

Coursebox emphasizes rapid conversion of content into lessons, quizzes, videos, and interactive elements. Elucidat emphasizes AI-powered outlines and "best-practice learning design" support. Each of these capabilities is useful. None of them, by itself, solves the deeper problem of instructional judgment.

That is why the central issue is not whether these tools are good or bad. Many of them are clearly useful. The issue is what model of work they are built around.

Most of them are built around some version of this logic: upload, prompt, generate, refine, publish. That is a production workflow.

Instructional Design Workflow

A real Instructional Design workflow is different. It should begin with understanding Subject Matter Expert (SME) content, but not merely summarizing it. It should move into learning flow, but not before clarifying what matters. It should define objectives and assessment together, not as isolated outputs.

It should build storyboard architecture before surface polish. It should deliberately reduce text heaviness without removing necessary meaning. It should use AI not only to generate, but to critique, challenge, and audit. And it should preserve explicit human confirmation at every important stage.

That is where most current tools still fall short. They optimize for speed of creation. They do not yet reliably optimize for quality of human-AI collaboration.

This matters because today’s tools often remove friction at exactly the places where some friction is useful. Good Instructional Design is not built only by moving faster. It is built by wrestling with ambiguity, making distinctions, rejecting weak options, and deciding what kind of learning treatment is actually appropriate.

If the tool keeps stepping in mainly as a content machine, the user can drift into passive acceptance. The work gets done. The design judgment may not get stronger. That is not a small issue. It is the strategic issue.

Many of today’s AI course-creation tools can help a team produce faster. Far fewer can help the team think better while producing.

That is why I have argued elsewhere for a different model: AI as a thinking partner, not a content machine.

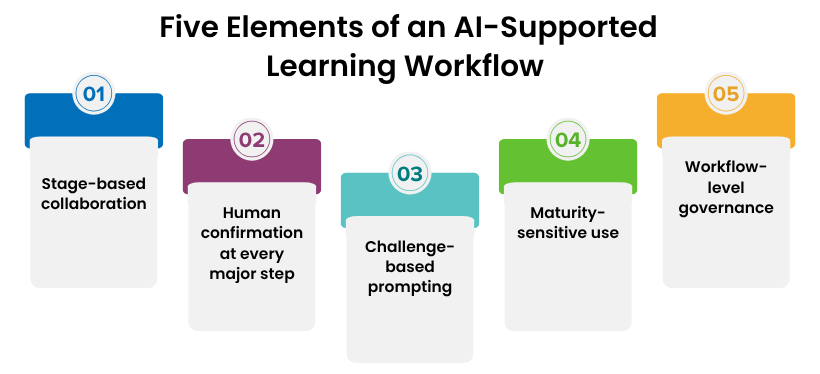

In practical terms, that means the strongest AI-supported learning workflow should include at least five elements.

Image by CommLab India

First, stage-based collaboration. AI should not behave the same way from beginning to end.

- At the SME stage, it should help clarify and simplify.

- At the learning-flow stage, it should help structure options.

- At the learning objective stage, it should help draft and test performance language.

- At the assessment stage, it should challenge alignment, plausibility, and difficulty.

- At the final stage, it should change roles and audit the work as an independent reviewer.
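As a rough illustration of stage-based collaboration, the five stages above could be expressed as a lookup that changes the role the AI is asked to play. This is only a sketch: the `Stage` names, the instruction wording, and the `build_prompt` helper are all hypothetical and do not describe how any of the tools discussed here work.

```python
from enum import Enum


class Stage(Enum):
    SME = "sme"
    LEARNING_FLOW = "learning_flow"
    OBJECTIVES = "objectives"
    ASSESSMENT = "assessment"
    FINAL_AUDIT = "final_audit"


# Hypothetical role instructions that paraphrase the five stages in the article.
ROLE_BY_STAGE = {
    Stage.SME: "Clarify and simplify the SME content.",
    Stage.LEARNING_FLOW: "Propose structured options for the learning flow.",
    Stage.OBJECTIVES: "Draft and test performance language for the objectives.",
    Stage.ASSESSMENT: "Challenge alignment, plausibility, and difficulty.",
    Stage.FINAL_AUDIT: "Audit the work as an independent reviewer.",
}


def build_prompt(stage: Stage, material: str) -> str:
    """Prefix the material with the role the AI should take at this stage."""
    return f"Your role: {ROLE_BY_STAGE[stage]}\n\nMaterial:\n{material}"
```

The point of the sketch is the design choice, not the code: the same draft gets a different kind of AI response depending on where the team is in the process.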

Second, human confirmation at every major step. A system that allows AI-generated outputs to glide straight into deliverables without deliberate review is not improving Instructional Design. It is automating parts of it while hoping quality will survive.
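One way to picture that requirement is a publishing step that refuses to run until a named person has signed off on every artifact, with a written reason. The sketch below is a minimal, assumed model; `Artifact`, `Workflow`, and `UnconfirmedError` are illustrative names, not features of any product mentioned in this article.

```python
from dataclasses import dataclass, field
from typing import List, Optional


class UnconfirmedError(Exception):
    """Raised when an artifact reaches publish without human sign-off."""


@dataclass
class Artifact:
    stage: str
    content: str
    approved_by: Optional[str] = None
    rationale: Optional[str] = None


@dataclass
class Workflow:
    artifacts: List[Artifact] = field(default_factory=list)

    def submit(self, stage: str, content: str) -> Artifact:
        """Register an AI-generated draft; it starts out unapproved."""
        artifact = Artifact(stage, content)
        self.artifacts.append(artifact)
        return artifact

    def confirm(self, artifact: Artifact, reviewer: str, rationale: str) -> None:
        """Record a human decision; an empty rationale is rejected."""
        if not rationale.strip():
            raise ValueError("confirmation needs a written rationale")
        artifact.approved_by = reviewer
        artifact.rationale = rationale

    def publish(self) -> List[Artifact]:
        """Release the artifacts only if every one has been confirmed."""
        pending = [a.stage for a in self.artifacts if a.approved_by is None]
        if pending:
            raise UnconfirmedError(f"awaiting human review: {', '.join(pending)}")
        return list(self.artifacts)
```

Requiring a rationale, not just a click, is a deliberate bit of friction: it asks the reviewer to justify the decision rather than accept the draft passively.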

Third, challenge-based prompting. The strongest use of AI is not only supportive. It is adversarial in the right way. The AI should sometimes act as a critic, devil’s advocate, red team, or reviewer. It should ask what is weak, what has been oversimplified, which distractor is implausible, which interaction is decorative, and what assumption is hidden in the learning flow. Most current tools are far stronger at assistance than at disciplined challenge.

Fourth, maturity-sensitive use. Junior IDs, mid-level IDs, and senior IDs should not all use AI in the same way. A junior may need explanation and scaffolding. A mid-level ID may need critique and comparison. A senior ID may need an audit partner. Most platforms do not yet handle that difference intelligently.
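In its simplest form, maturity-sensitive use could start as nothing more than a mapping from experience level to the kind of support the AI offers. The level names and mode labels below are assumptions made for illustration only.

```python
# Hypothetical mapping from ID experience level to the AI's support mode.
SUPPORT_BY_LEVEL = {
    "junior": "explain",   # explanation and scaffolding
    "mid": "critique",     # critique and comparison
    "senior": "audit",     # audit partner
}


def support_mode(level: str) -> str:
    """Return the support mode for a level, rejecting unknown levels."""
    try:
        return SUPPORT_BY_LEVEL[level.strip().lower()]
    except KeyError:
        raise ValueError(f"unknown experience level: {level!r}") from None
```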

Fifth, workflow-level governance. Tool access is not governance. Real governance means deciding where AI helps, where it challenges, where it must not lead interpretation, and how quality will be reviewed. Most platforms talk more about creation speed than about professional review discipline.

This is why I think the current market is both impressive and incomplete.

Impressive, because the tools are genuinely advancing. Claude Design shows how close general-purpose AI is moving toward interactive visual creation. Articulate is embedding AI directly inside mainstream authoring. Easygenerator and iSpring are reducing the effort required to convert source content into publishable learning.

Synthesia is making video-heavy learning much more scalable. Coursebox is reducing the grunt work of basic course assembly. Elucidat is trying to give AI a learning-design role, at least at the outline level. These are real developments.

Incomplete, because the dominant model is still too generation-centric. The tools are getting better at churning out course components. They are not yet equally good at building the disciplined human-AI partnership that stronger Instructional Design demands.

That is the uncomfortable truth beneath the excitement.

Who Is Winning?

The future winners in this market will not simply be the tools that generate the fastest first draft. They will be the ones that best support a co-creation workflow in which:

- AI helps clarify, structure, and generate.

- Humans evaluate, justify, and decide.

- AI challenges weak reasoning at the right moments.

- Humans confirm and refine.

- AI audits the final work with fresh distance.

- The workflow itself protects instructional judgment instead of hollowing it out.

That is a very different vision from "turn your docs into a course in minutes." And it is the better one.

Because the future of learning will not be improved by automated course churn alone. It will improve when AI is designed to collaborate with human judgment rather than quietly replace the parts of the process where judgment matters most.

That is where most of today’s tools still fall short.

And that is where the next real innovation needs to happen.

References:

[1] Anthropic, Introducing Claude Design by Anthropic Labs (April 17, 2026).

[2] Articulate, Meet your new AI Assistant and What is Articulate AI Assistant?

[3] Easygenerator, product features and authoring pages.

[4] iSpring, product features and LMS pages.

[5] Synthesia, AI Training Course Generator and L&D pages.

[6] Coursebox, home page.

[7] Elucidat, AI-powered course creation pages.